Multi-Cloud Machine Learning

Executive Summary

80% of companies rely on hybrid cloud for their IT infrastructure strategy. Machine learning initiatives are often the exception to the rule, with companies focusing on a single cloud instead. This approach has two major drawbacks: cost and vendor lock-in.

We have identified four paths that companies often explore to move from a single cloud to a hybrid cloud in their ML initiatives. Each path comes with its own pros and cons, which differ depending on the stakeholder's perspective.

Multi-Cloud is the Default (for Traditional Software)

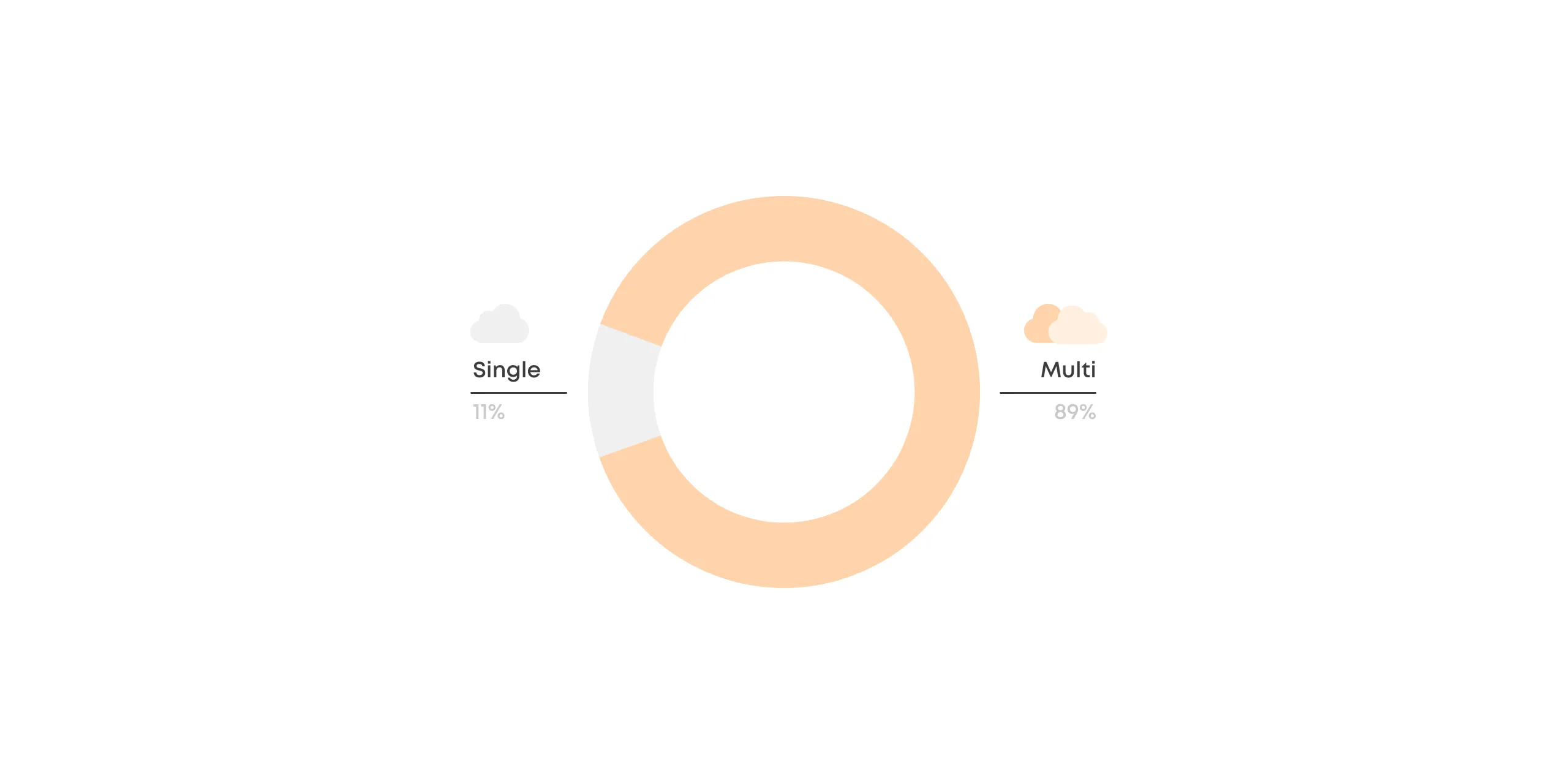

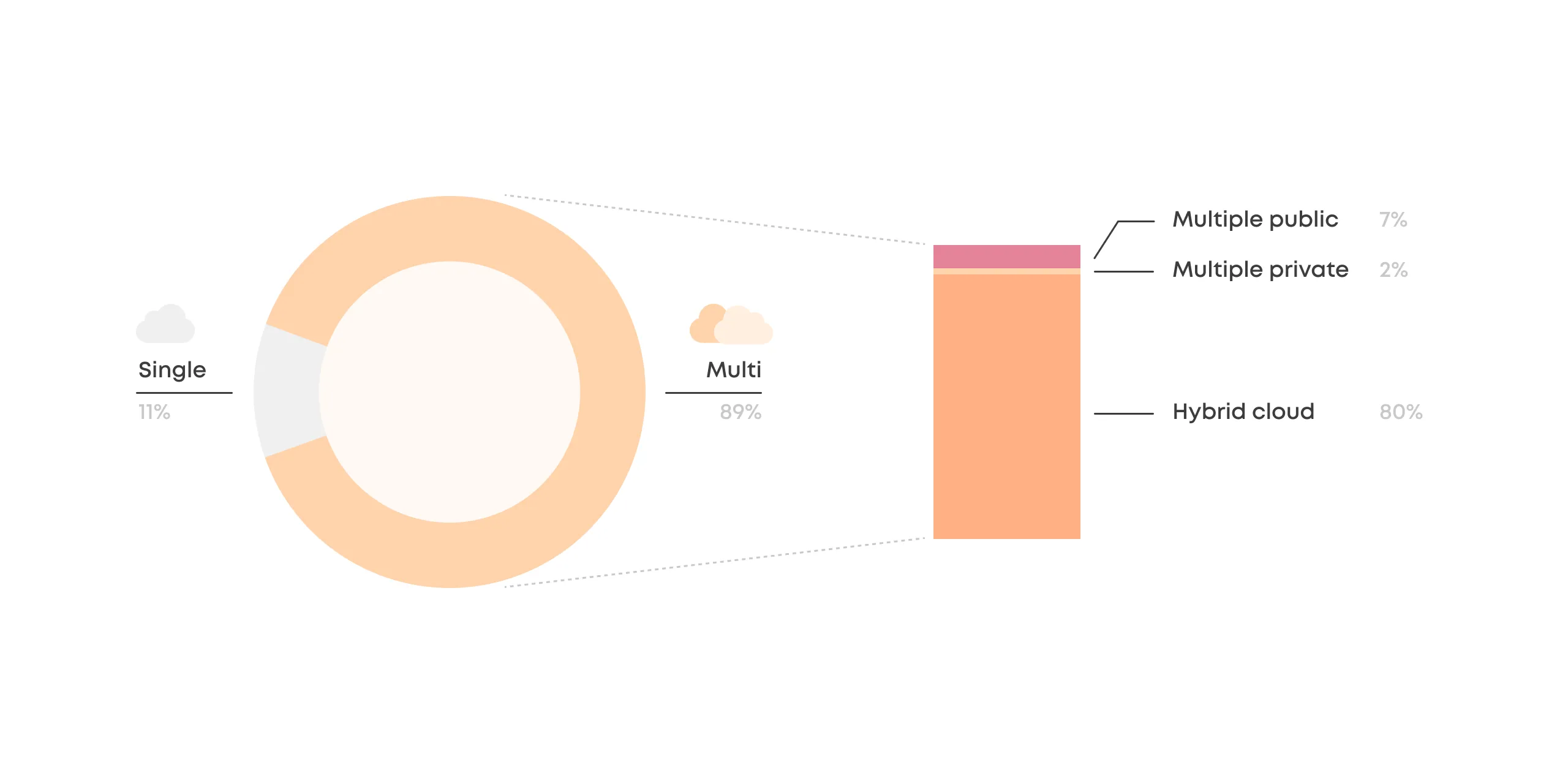

The State of the Cloud Report 2022 from Flexera reveals that, of all companies using the cloud, 89% have a multi-cloud strategy, meaning they use more than one cloud provider.

While multi-cloud is the explicit default as a company-wide strategy, many organizations are not exercising it within their ML initiatives. In fact, multi-cloud is often an afterthought when it comes to ML infrastructure.

There is a reason why ML is lagging, but we'll get to that later.

First, let's take a closer look at how the cloud pie is sliced.

The Multi-Cloud is a Hybrid Cloud

Companies with a multi-cloud strategy predominantly use both public and private clouds simultaneously. This strategy is called the hybrid cloud, and according to Flexera's report, 80% of all companies utilize the hybrid cloud strategy.

This makes sense as the hybrid cloud offers the best of both worlds:

- Flexibility of the public cloud.

- Security and cost optimization of the private cloud.

Multiple public clouds are used by 7% of companies. While this setup offers some diversification and cost leverage, it falls far short of the benefits of the hybrid cloud strategy.

Hybrid Cloud ML is on the Rise

A public cloud is ideal for workloads that need to scale flexibly without downtime. Plenty of ML workloads fit this description, especially when it comes to using models for real-time inference.

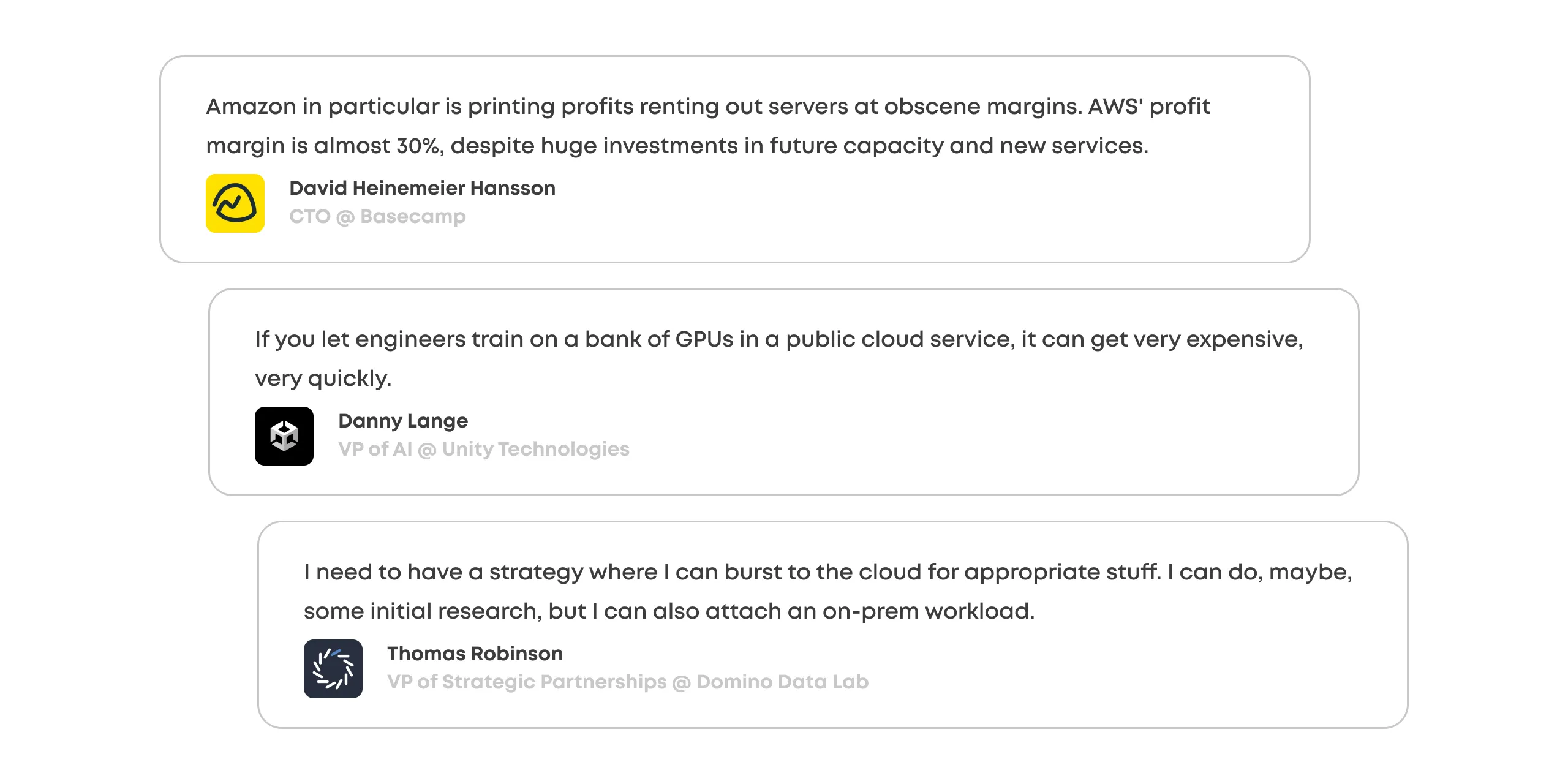

The flexibility of the public cloud does come with its drawbacks:

- Large price markups, especially for ML-specific computation (e.g. SageMaker)

- Incompatibility with some industry regulations

- Vendor lock-in

A large part of ML doesn't require the public cloud's flexibility because the workloads aren't as time-sensitive. Model training and batch inference are typical examples.
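The reasoning above can be read as a simple placement rule. The following is a minimal sketch under stated assumptions, not a description of any real scheduler: the `Workload` fields, the `place` function, and the two-target split are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    PUBLIC_CLOUD = "public cloud"
    PRIVATE_CLOUD = "private cloud"


@dataclass(frozen=True)
class Workload:
    # All fields are illustrative assumptions, not taken from a real platform.
    name: str
    latency_sensitive: bool  # must scale on demand without downtime
    regulated_data: bool     # data that regulations keep off the public cloud


def place(workload: Workload) -> Target:
    """Pick an infrastructure target for a workload."""
    # Regulation is treated as a hard constraint, so it is checked first.
    if workload.regulated_data:
        return Target.PRIVATE_CLOUD
    # Time-sensitive workloads benefit from the public cloud's flexibility.
    if workload.latency_sensitive:
        return Target.PUBLIC_CLOUD
    # Everything else defaults to the private cloud for cost optimization.
    return Target.PRIVATE_CLOUD


workloads = [
    Workload("real-time inference", latency_sensitive=True, regulated_data=False),
    Workload("model training", latency_sensitive=False, regulated_data=False),
    Workload("batch inference", latency_sensitive=False, regulated_data=False),
]

for w in workloads:
    print(f"{w.name} -> {place(w).value}")
```

In this sketch only real-time inference lands on the public cloud; model training and batch inference go to the private cloud. A real policy would likely weigh more factors, such as data location and available capacity.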

Companies have already shifted to a hybrid cloud strategy, so why is it not exercised in ML despite the apparent opportunities?

The answer is simple: ML is more complicated than traditional software development.

Let's go deeper to understand why.

Quotes by David Heinemeier Hansson, Danny Lange & Thomas Robinson

From Single to Multi-Cloud

Most ML teams are stuck in the status quo: a single cloud provider, often combined with the ML platform native to that cloud.

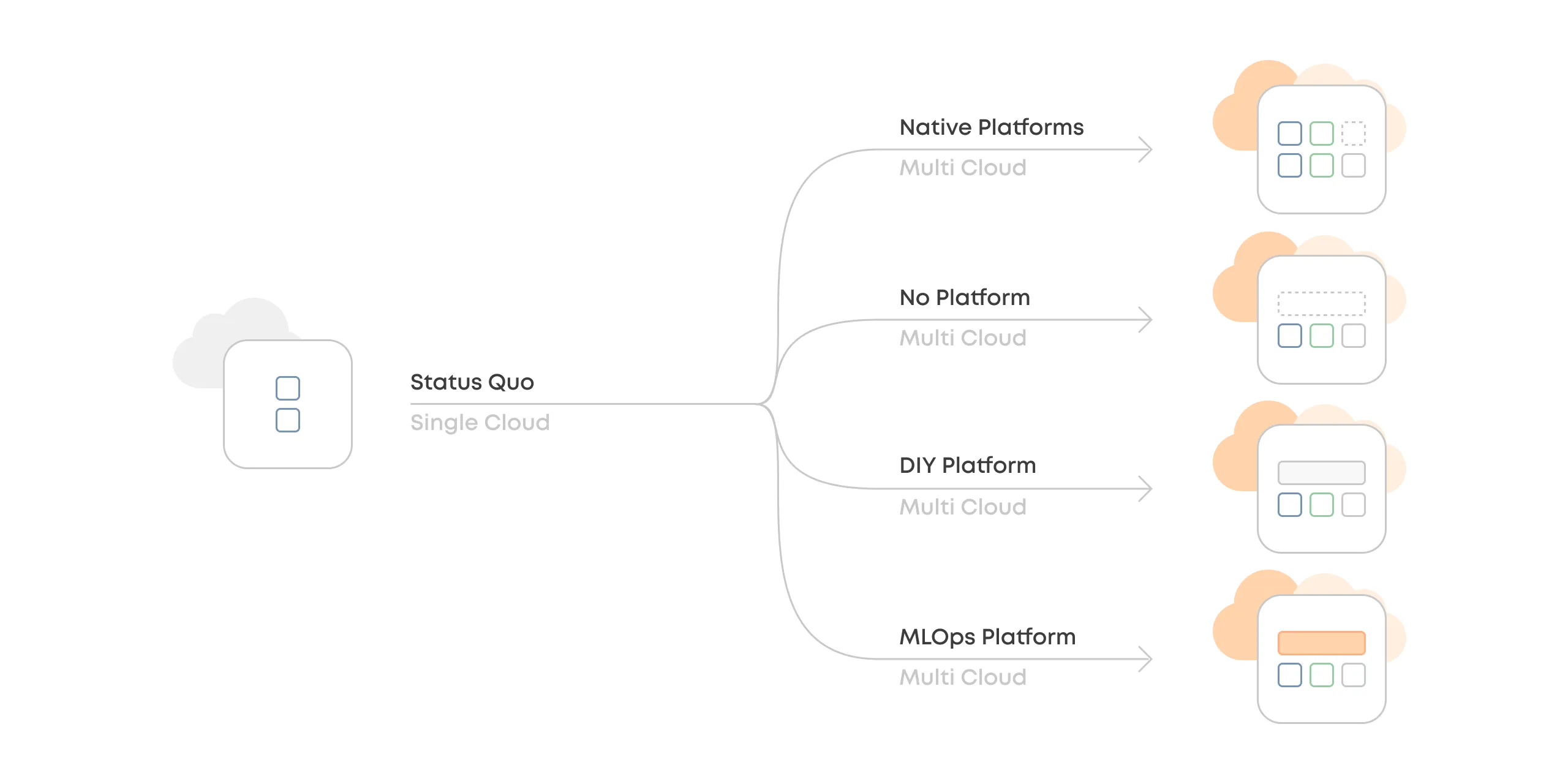

We've identified four different paths that companies tend to explore when they migrate from single-cloud ML to multi-cloud ML:

- Native Platforms

- No Platform

- DIY Platform

- Managed MLOps Platform

Each option has different tradeoffs and may be more or less ideal depending on the stakeholder.

Let's look at them one by one.

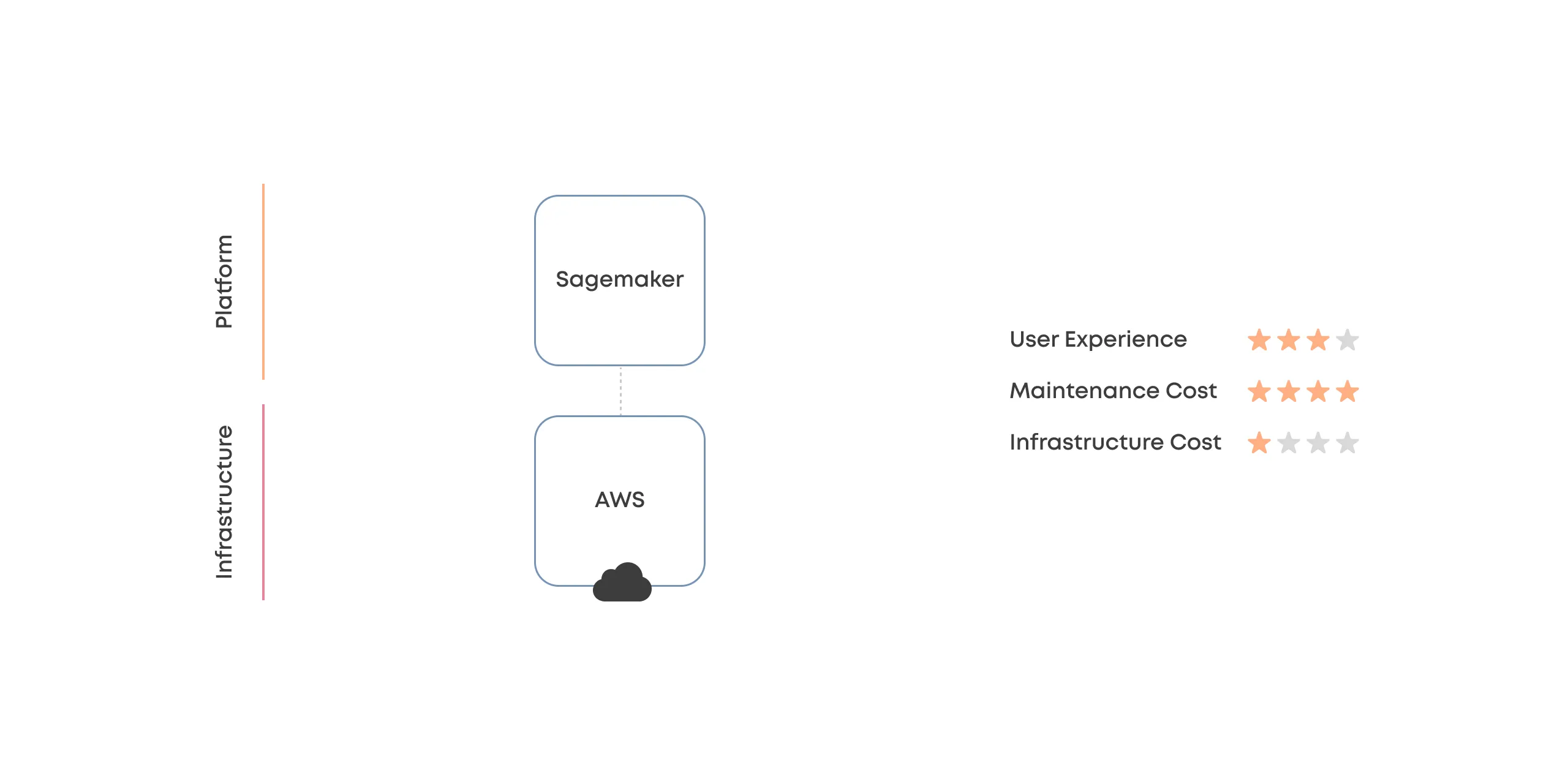

Status Quo

Single Public Cloud + Cloud Vendor Platform

The status quo is a single cloud vendor with its own flavor of ML platform, such as SageMaker.

This is where many ML practitioners are stuck today: at a local maximum.

The most significant drawback is that teams cannot optimize for cost, even in use cases where a public cloud doesn't inherently provide any additional value.

Perspectives

Manager: "The costs are too high, and there is absolutely nothing we can do about it."

IT: "We are happy! Everything is easy in our favorite cloud."

Data Scientist: "Most things are straightforward and simple, but the cloud vendor's platform is not the snappiest, and the libraries are sometimes lacking."

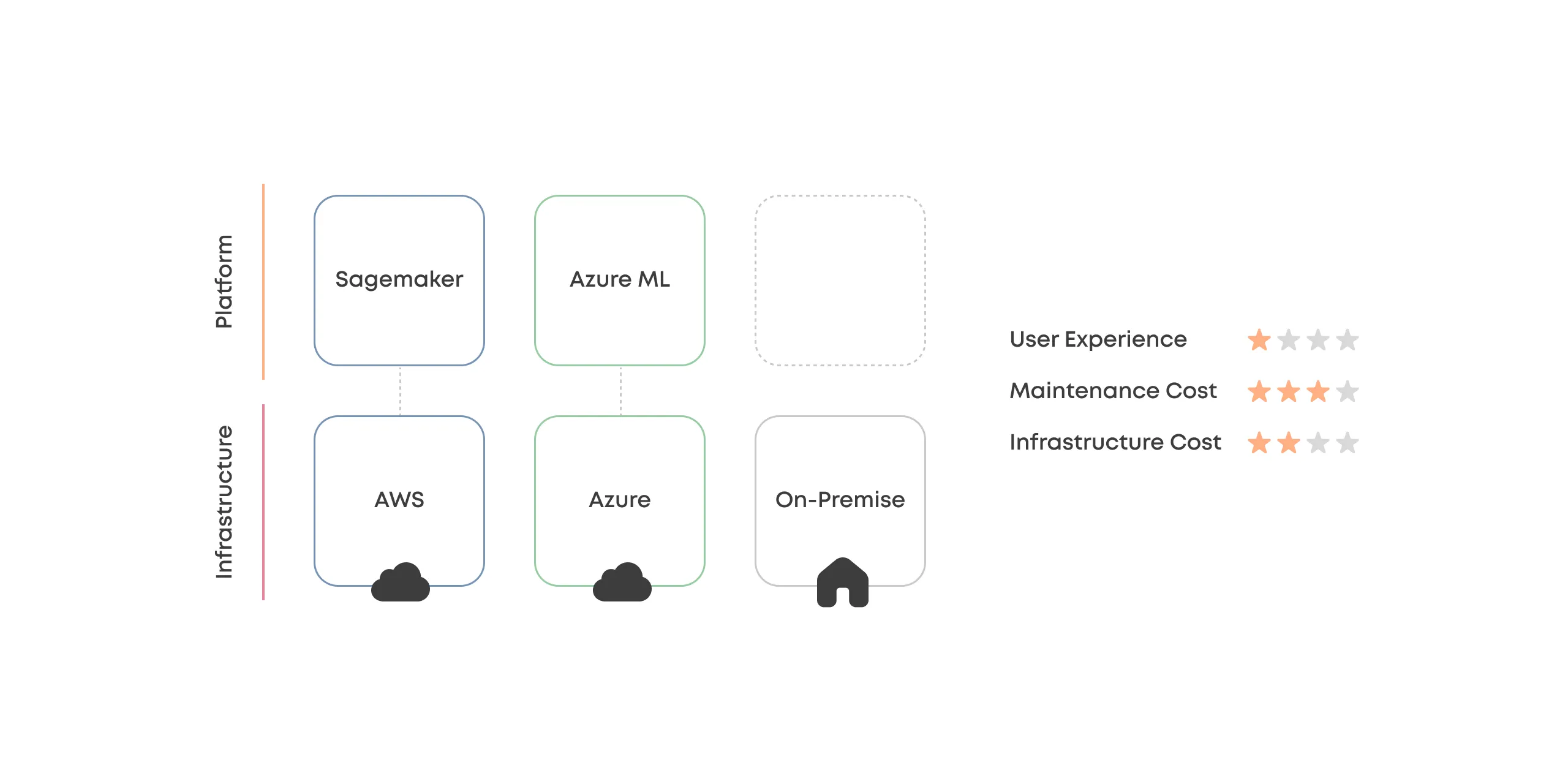

Option #1

Native Platforms

Using multiple cloud vendors and their respective ML platforms simultaneously.

It is common for ML consultancies to end up here, as their clients use different cloud vendors.

This approach opens up a lot of possibilities but suffers from complexity.

Perspectives

Manager: "More cost optimization opportunities, but mostly in theory."

IT: "This is a horror. We need to jump between multiple cloud vendors and deal with their subtle differences."

Data Scientist: "I liked the simplicity of a single cloud more."

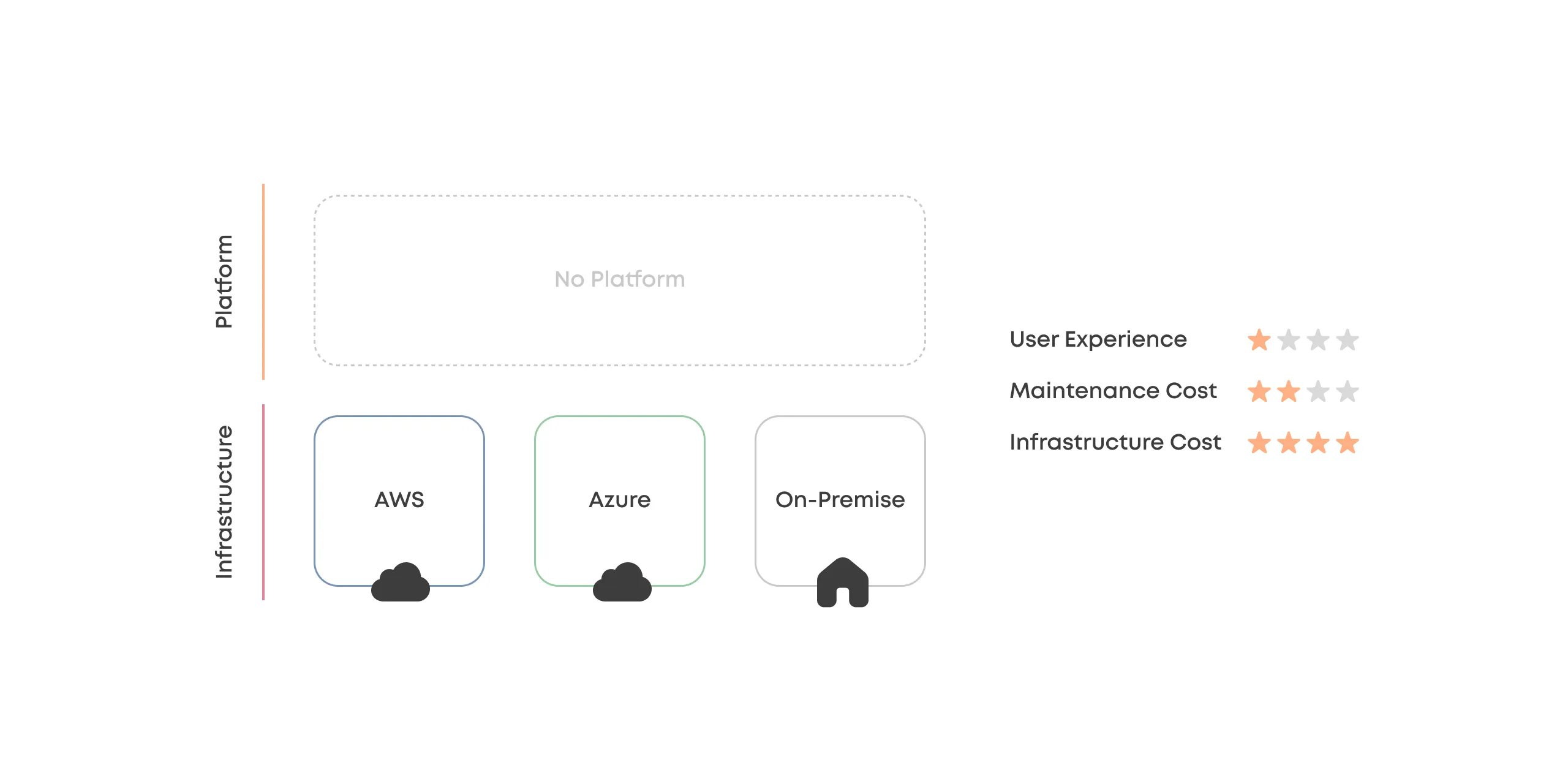

Option #2

No Platform

Tossing away the ML platforms and trying to use the hybrid cloud without abstraction layers.

While utilizing cloud and on-premise computing directly offers the most flexibility, it is impractical, mainly due to the poor user experience for practitioners.

Perspectives

Manager: "I could optimize costs, but I have no centralized tools to do it."

IT: "Still a horror. We need to manage multiple cloud vendors."

Data Scientist: "Impossible to get anything done as I'm constantly stuck with engineering."

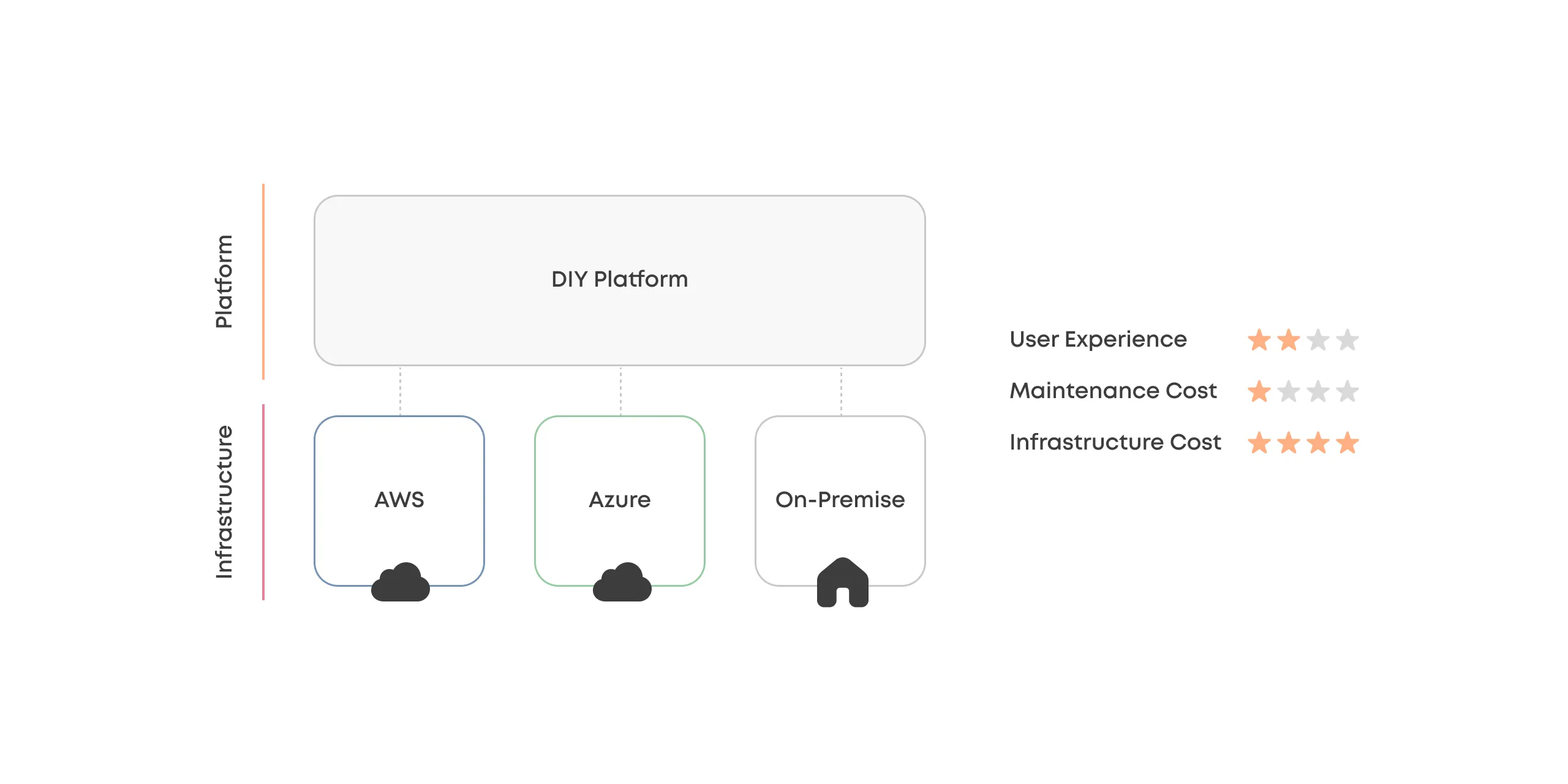

Option #3

DIY Platform

Building an in-house MLOps platform to manage it all is a common playbook in cutting-edge tech companies such as Uber, Netflix, Google, and Meta.

A DIY approach offers enormous flexibility at the expense of maintenance and development costs.

Even most enterprises lack the scale needed to justify the maintenance and development costs of a non-core platform.

Perspectives

Manager: "I can finally monitor and optimize costs, but building our own platform is slower and more expensive than we thought."

IT: "There is more separation between data science and engineering, but we are struggling with bugs."

Data Scientist: "It is nice to have our own platform, which can be customized to our use cases, but it is unreliable and lacks features."

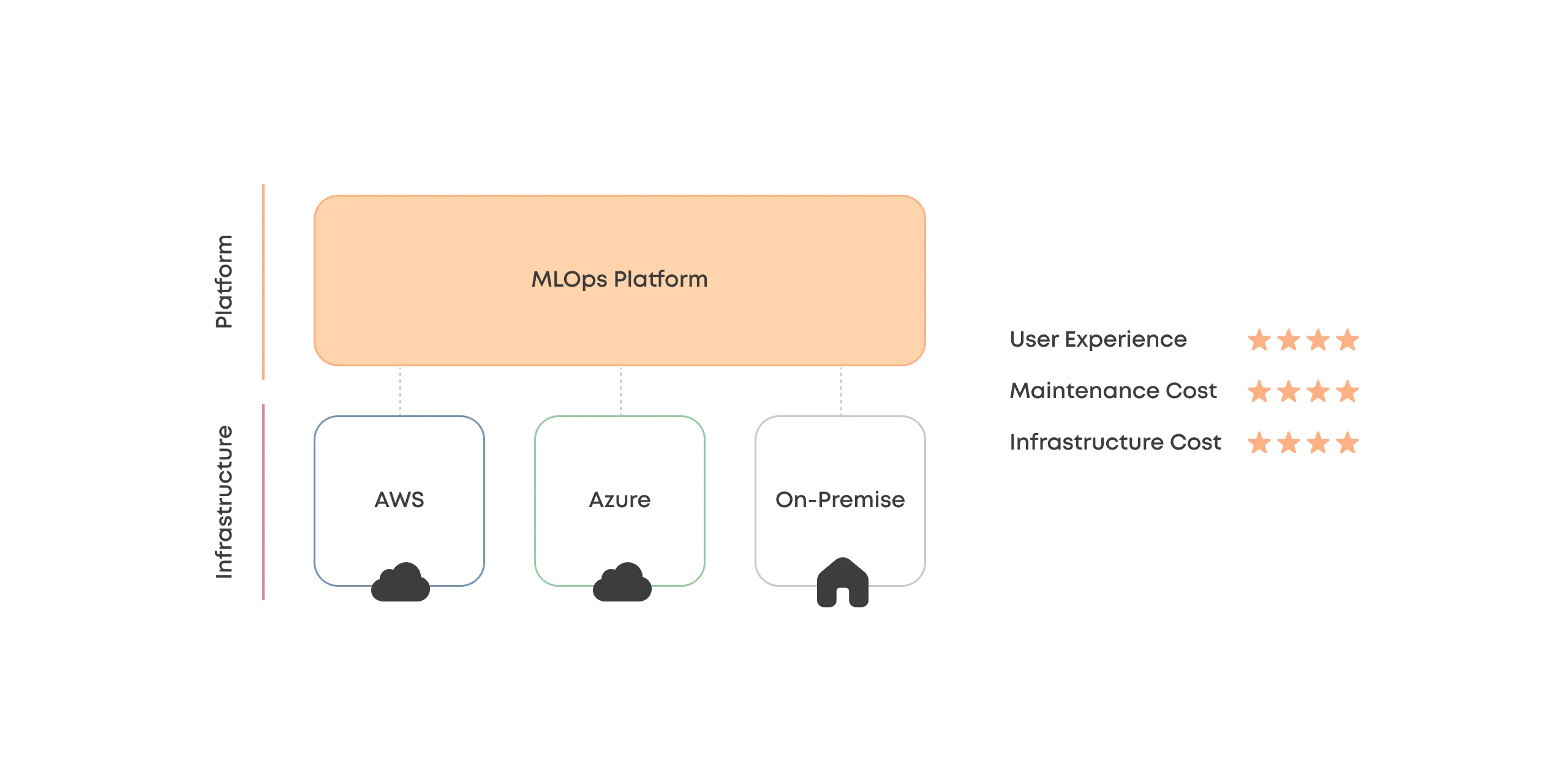

Option #4

Managed MLOps Platform

While hybrid cloud is necessary, it is very complicated, and building an in-house MLOps platform is not a core business for most companies.

The best option is a managed MLOps platform (Valohai) that serves as a unified abstraction layer between practitioners and their hybrid cloud infrastructure.
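To make the idea of a unified abstraction layer more concrete, here is a minimal sketch of the pattern in general terms. It is not Valohai's API: `Backend`, `SimulatedBackend`, `Platform`, `submit`, and the hourly rates are all hypothetical names and placeholder values chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Backend(Protocol):
    """What the layer expects from any cloud or on-premise environment."""
    name: str
    hourly_rate: float  # placeholder price per compute hour, not a real quote

    def run(self, job_name: str, hours: float) -> str: ...


@dataclass
class SimulatedBackend:
    name: str
    hourly_rate: float

    def run(self, job_name: str, hours: float) -> str:
        # A real backend would call a cloud or on-premise scheduler here.
        return f"{job_name} ran on {self.name} for {hours}h"


@dataclass
class Platform:
    """Single entry point for practitioners; tracks spend per backend."""
    backends: dict[str, Backend]
    costs: dict[str, float] = field(default_factory=dict)

    def submit(self, job_name: str, hours: float, backend: str) -> str:
        target = self.backends[backend]
        result = target.run(job_name, hours)
        # Record the cost centrally so it can be monitored in one place.
        spent = hours * target.hourly_rate
        self.costs[backend] = self.costs.get(backend, 0.0) + spent
        return result


platform = Platform({
    "public": SimulatedBackend("public", hourly_rate=2.0),
    "on_prem": SimulatedBackend("on_prem", hourly_rate=0.5),
})
print(platform.submit("train", hours=10, backend="on_prem"))
print(platform.submit("serve", hours=3, backend="public"))
print(platform.costs)
```

The practitioner calls the same `submit` method regardless of where the job runs, while the per-backend cost record gives managers and IT one place to look. A production platform would add much more, such as authentication, queuing, and data access.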

Perspectives

Manager: "I have a centralized place to optimize and monitor costs and projects. Loving it!"

IT: "We can manage all cloud resources from the same place, and the MLOps platform is reliable and extendable. Very nice!"

Data Scientist: "I don't need to worry about different clouds anymore. Everything works!"

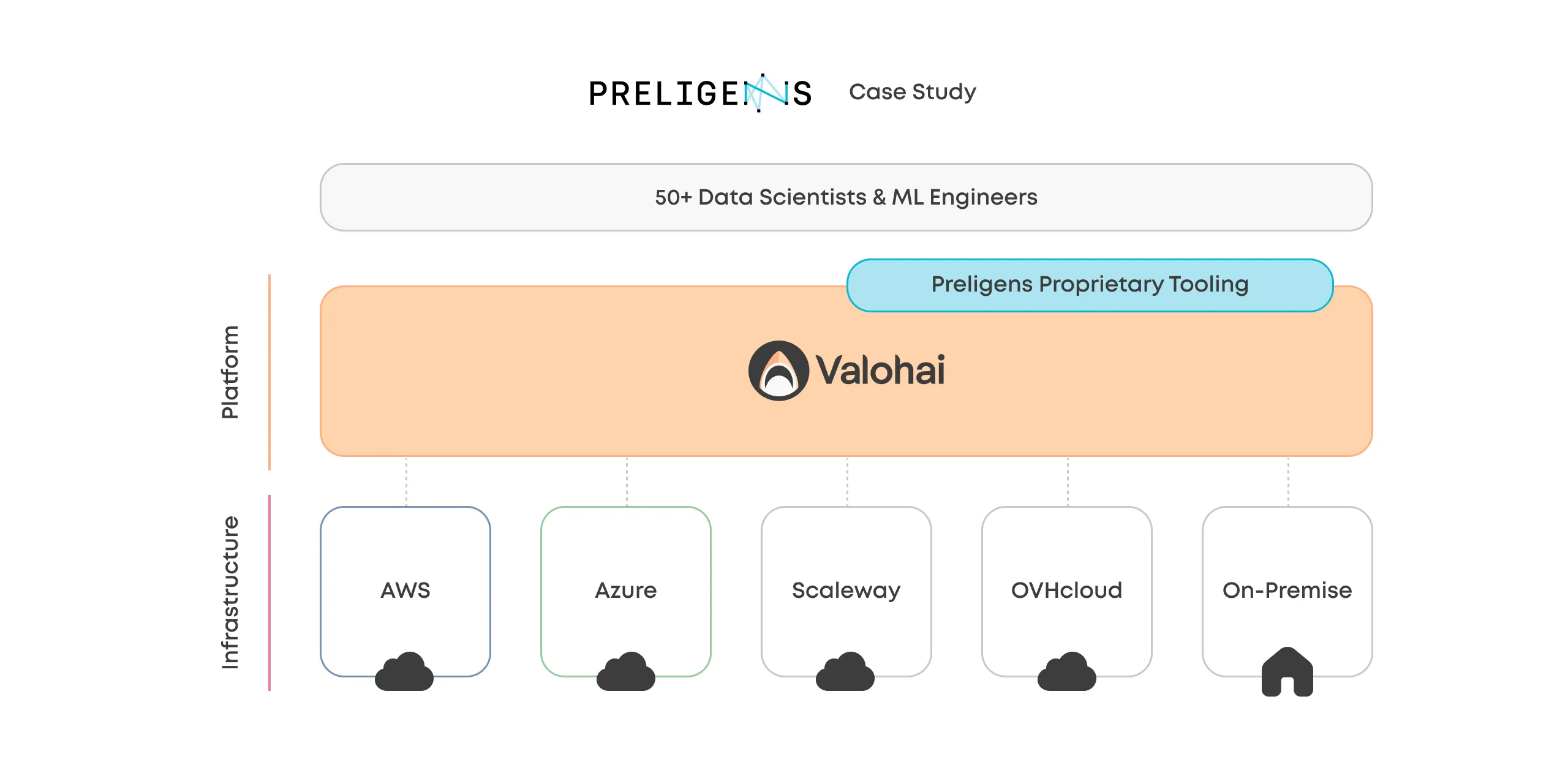

Preligens uses Valohai for hybrid cloud ML.

While many options exist for an ML multi-cloud strategy, most fall short for the stakeholders.

Preligens is a company building software for analysts in the defense and intelligence space. The Valohai MLOps platform functions as the infrastructure layer for their successful hybrid cloud strategy.

Preligens also considered the DIY path but found Valohai to be the more cost-effective solution:

Building a barebones infrastructure layer for our use case would have taken months, and that would just have been the beginning. The challenge is that with a self-managed platform, you need to build and maintain every new feature, while with Valohai, they come included.

Renaud Allioux, Co-Founder & CTO, Preligens

With Valohai, Preligens can run ML workloads flexibly depending on regulatory requirements and cost-effectiveness.

Read more about Preligens' hybrid cloud approach and experience with Valohai.

More about Valohai

Valohai is the MLOps platform purpose-built for ML Pioneers, giving them everything they've been missing in one platform that just makes sense.