We’ve said it many times: 2021 is the year of MLOps.

Why? Many companies are finally at the point with machine learning where they need a more systematic approach to developing and maintaining production models. Once machine learning has proved its value, it’s no longer about pushing out a single version of a model but about developing a system to iterate continuously.

We’ve seen this with our users as well. They are automating away unnecessary parts of their work and, even more interestingly, building previously impossible products. For example, our customer Levity recently launched their AI tool for workflow automation, which, under the hood, trains a custom model whenever an end user creates a new workflow.

In the past few months, we’ve rolled out three new features that highlight end-to-end automation on our platform:

- Deployment nodes in pipelines
- Pipeline scheduler
- Model monitoring

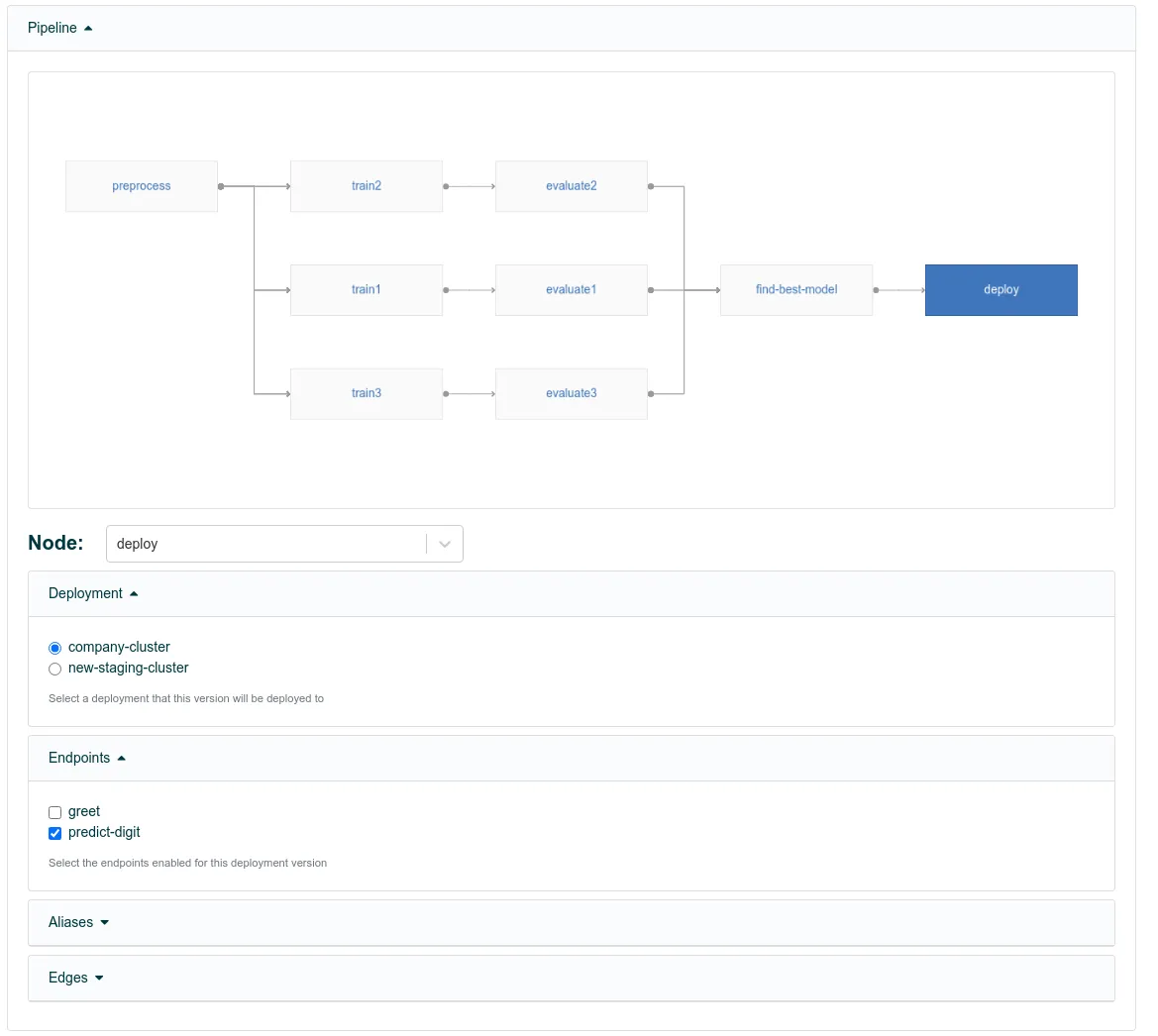

Deployment nodes in pipelines

The Valohai pipelines now feature a special node type that allows the deployment of a model.

After all the data wrangling, training, and testing, the logical last step of many ML pipelines is to deploy the model to production. Previously this was a manual step, but now Valohai has a special pipeline node type that deploys the model to the chosen endpoint.

The user adds a new node of this type to valohai.yaml. The target deployment and endpoints are just defaults, which can be changed dynamically for every pipeline execution.

    nodes:
      - name: deploy-to-production
        type: deployment
        deployment: my-production-cluster
        endpoints:
          - predict-digit

Then the node needs to be connected to the rest of the pipeline using a normal edge. Here we take model.pb from the training node's output and deploy it.

    edges:
      - [training.output.model.pb, deploy-to-production.file.predict-digit.model]

Deployment nodes allow our clients to take models to production without any human intervention, enabling use cases such as on-demand custom models.
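Because an edge refers to nodes by name, a quick sanity check before committing a configuration like the one above can catch an edge that points at a node that was never declared. The sketch below is a hypothetical helper, not part of Valohai's tooling. It mirrors the configuration as plain Python data, and the `training` node's `type: execution` entry is an assumption added only so the example is complete.

```python
# Hypothetical pre-commit check; not a Valohai API.
def undefined_edge_nodes(nodes, edges):
    """Return node names that are used in edges but not declared in nodes."""
    declared = {node["name"] for node in nodes}
    missing = []
    for source, target in edges:
        for endpoint in (source, target):
            # The node name is everything before the first dot.
            node_name = endpoint.split(".", 1)[0]
            if node_name not in declared and node_name not in missing:
                missing.append(node_name)
    return missing


# Plain-data mirror of the YAML; the training node is assumed for illustration.
nodes = [
    {"name": "training", "type": "execution"},
    {
        "name": "deploy-to-production",
        "type": "deployment",
        "deployment": "my-production-cluster",
        "endpoints": ["predict-digit"],
    },
]
edges = [
    ("training.output.model.pb", "deploy-to-production.file.predict-digit.model"),
]

print(undefined_edge_nodes(nodes, edges))
```

An empty list means every edge endpoint refers to a declared node.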

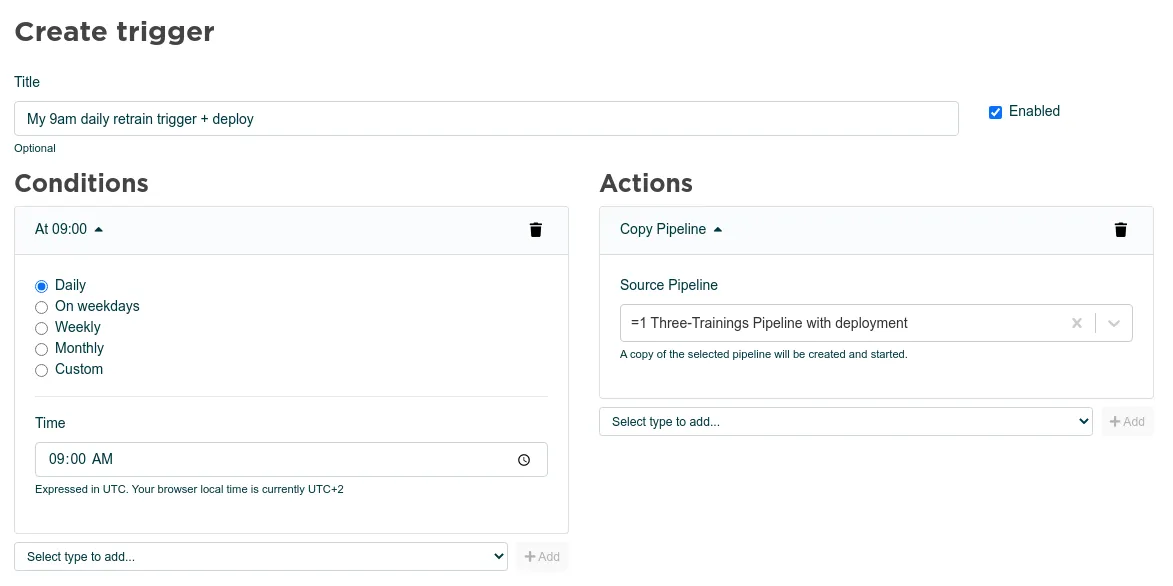

Pipeline scheduler

Valohai pipelines can now be triggered on a schedule.

Nobody wants to be in charge of mindlessly starting the same re-training pipeline every day. With Valohai triggers, you can automatically schedule your pipeline executions. In addition to our typical daily, weekly, and monthly presets, you can create a more custom schedule using the classic cron format. For example, "0 9 * * 1-2" would trigger a pipeline at 9 am on every Monday and Tuesday. You can also start multiple pipelines with the same trigger.
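To make the cron format concrete, here is a minimal sketch of how a five-field expression can be checked against a point in time. It is an illustration only, not how Valohai evaluates triggers. It supports `*`, single numbers, ranges, and comma lists, and it simplifies standard cron in two ways: it does not handle step values such as `*/5`, and it requires all five fields to match.

```python
from datetime import datetime


def _field_matches(field, value):
    """Check one cron field against a value ('*', 'a', 'a-b', or comma lists)."""
    for part in field.split(","):
        if part == "*":
            return True
        if "-" in part:
            low, high = (int(x) for x in part.split("-"))
            if low <= value <= high:
                return True
        elif int(part) == value:
            return True
    return False


def cron_matches(expression, moment):
    """Return True if the five-field cron expression matches the given datetime."""
    minute, hour, day, month, weekday = expression.split()
    # cron counts Sunday as 0; Python's weekday() counts Monday as 0.
    cron_weekday = (moment.weekday() + 1) % 7
    return (
        _field_matches(minute, moment.minute)
        and _field_matches(hour, moment.hour)
        and _field_matches(day, moment.day)
        and _field_matches(month, moment.month)
        and _field_matches(weekday, cron_weekday)
    )


# 1 March 2021 was a Monday, 3 March 2021 a Wednesday.
print(cron_matches("0 9 * * 1-2", datetime(2021, 3, 1, 9, 0)))
print(cron_matches("0 9 * * 1-2", datetime(2021, 3, 3, 9, 0)))
```

The first call matches because it falls at 9:00 on a Monday. The second does not, because Wednesday is outside the `1-2` weekday range.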

Time-based triggering was the most requested trigger type among our clients, but we see many opportunities to expand how pipelines can be automatically triggered. One clear future direction is to tie the pipeline scheduler to our next new feature, model monitoring.

Model monitoring

In the past six months, we’ve received a huge number of questions related to model monitoring. We agree that it’s an important topic to tackle to ensure the continued quality of production models. Valohai deployments are now complemented with the first version of model monitoring, allowing users to log and visualize any number of metrics from the incoming requests and served predictions.

Valohai deployment metadata monitoring is on par with the training metadata monitoring. Any JSON printed by the deployment code is now parsed by Valohai and can be inspected using the visual graph, just like for the training runs. Valohai uses Elasticsearch as a backend, which means that all the additional tooling of Elasticsearch is also at your disposal.

This printout for an endpoint request:

    {"vh_metadata": {"best_guess": 0.89, "best_guess_probability": 0.89}}

allows you to visually track two metadata items, "best_guess" and "best_guess_probability", over time.
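In deployment code, producing such a line can be as simple as serializing a dictionary and printing it. The following is a minimal sketch using only the standard library. The function name `log_prediction_metadata` is hypothetical, and the `vh_metadata` key and the two metric names are taken from the printout above.

```python
import json


def log_prediction_metadata(best_guess, probability):
    """Print one single-line JSON record for a served prediction and return it."""
    line = json.dumps(
        {
            "vh_metadata": {
                "best_guess": best_guess,
                "best_guess_probability": probability,
            }
        }
    )
    print(line)
    return line


# Called once per request, after the model has produced its prediction.
record = log_prediction_metadata(0.89, 0.89)
```

Calling the function once per request gives one record per prediction, so each metric can be followed over time.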

What’s next?

“The pipeline is the product, not the model.”

Pipelines are not just the core of any machine learning system; they are also the core of Valohai. Our focus is on building the best platform for end-to-end machine learning pipelines. We believe this will enable data scientists to create unique machine learning capabilities without being bound to maintaining them.

We would love to know more about the challenges you face with production machine learning and MLOps. Let us know below.