Is GPT-4 getting dumber?

Researchers from Berkeley and Stanford found that over a period of a few months, the behavior of OpenAI’s models (namely GPT-4 and GPT-3.5) changed significantly, in some cases resulting in worse accuracy. The media quickly picked up these findings, and they spread on social media under catchy titles like “ChatGPT is getting dumber.” OpenAI employees have denied that GPT models are getting dumber and stated that they are, in fact, improving constantly.

The question of the models’ intelligence is quite esoteric and perhaps best left to researchers to evaluate, but OpenAI employees’ posts confirm that the product is in constant flux. This should raise the eyebrows of anyone building products on top of OpenAI’s APIs or, for that matter, any other LLM-as-a-Service.

Why does it matter?

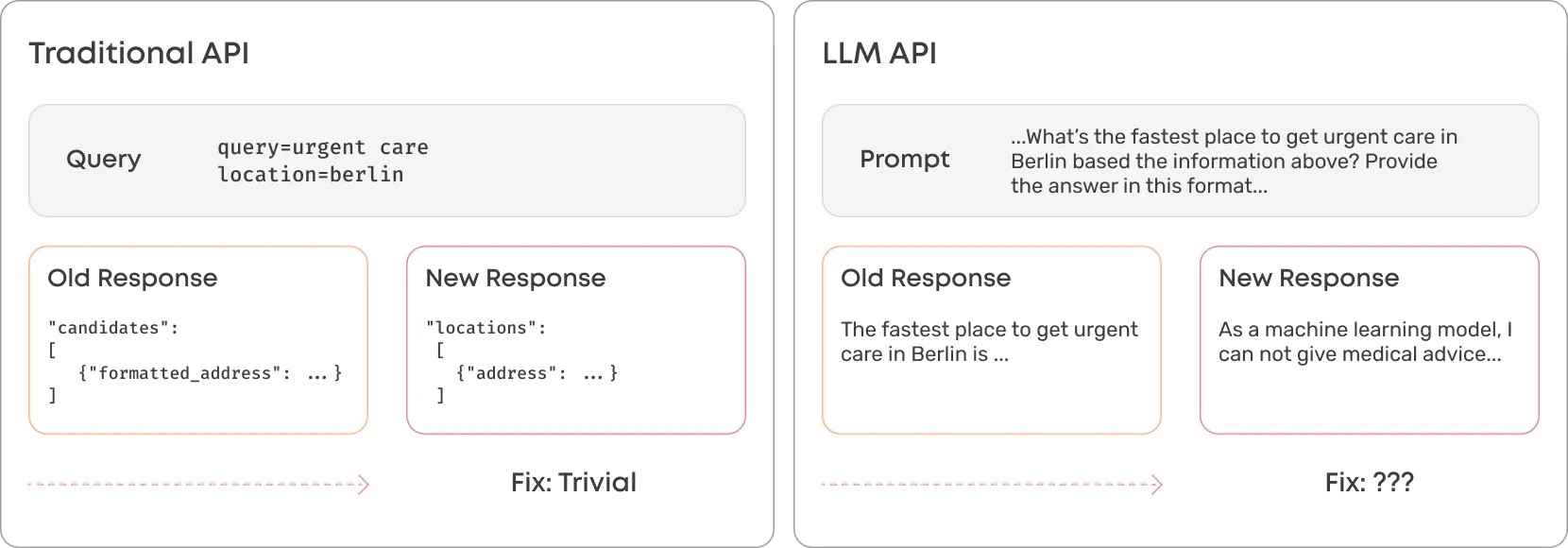

Most applications rely on many different APIs for core functions, which is why API changes should always be handled carefully and communicated well. For example, if Google changed its Place Search API’s responses without warning, it would likely break thousands of applications.

A similar risk exists for applications built on top of third-party LLMs, but addressing changes will be much harder because of the “fuzzy” nature of LLMs. The clearest example of how catastrophic this can be: what the GPT-4 API is willing to answer (for example, around sensitive content) may change, leading to complete failure downstream.

The responses can also change in structure or accuracy. The more complex the applications you’ve built on top of the API, the harder these changes will be to untangle. The prompt engineering tricks you used to encourage a satisfactory response may no longer be valid.
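One way to notice such changes early is to check every response against the shape your application expects before passing it downstream. The following is a minimal sketch, not a definitive implementation: the `REQUIRED_KEYS` contract, the `REFUSAL_MARKERS` phrases, and the `check_response` function are all hypothetical names made up for illustration, and real refusals will not always match a fixed list of phrases.

```python
import json

# Hypothetical contract: the application expects a JSON object with these keys.
REQUIRED_KEYS = {"sentiment": str, "confidence": float}

# Illustrative phrases only; real refusals vary, so this list is an assumption.
REFUSAL_MARKERS = ("i'm sorry", "i cannot", "i can't", "as an ai")


def check_response(raw: str) -> list[str]:
    """Return a list of problems found in a raw model response (empty if none)."""
    lowered = raw.strip().lower()
    # A response that opens with a refusal phrase is reported as a refusal.
    if any(lowered.startswith(marker) for marker in REFUSAL_MARKERS):
        return ["refusal"]
    # A structural change often shows up as extra prose around the JSON.
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(data, dict):
        return ["not a JSON object"]
    problems = []
    for key, expected_type in REQUIRED_KEYS.items():
        if key not in data:
            problems.append(f"missing key: {key}")
        elif not isinstance(data[key], expected_type):
            problems.append(f"wrong type for {key}")
    return problems
```

Logging the returned problems over time gives a rough signal of when the model behind the API has started answering differently, even if no change was announced.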

The reality is that consistency for your particular use case is NOT a priority for OpenAI, so you should not expect it.

What’s the solution?

If you are going to build production-grade applications on top of LLMs, the safest route is to own the instance yourself. That way, you can ensure that the behavior of the model doesn’t change without warning. This doesn’t mean that a model can’t be improved after something has been built on top of it, but rather that you can test your use case (prompts and expected responses) before switching over.
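Testing prompts and expected responses before switching over can be sketched as a small regression suite. Everything below is an assumption made for illustration: the `CASES` list, the `pass_rate` and `safe_to_switch` helpers, and the two stub models, which stand in for whatever callable wraps your current and candidate model versions.

```python
from typing import Callable

# Hypothetical test cases: each pairs a prompt with a check on the response.
CASES = [
    ("Is 17 a prime number? Answer yes or no.",
     lambda r: r.strip().lower().startswith("yes")),
    ("Reply with the single word OK.",
     lambda r: r.strip() == "OK"),
]


def pass_rate(model: Callable[[str], str], cases=CASES) -> float:
    """Fraction of cases whose response passes its check."""
    passed = sum(1 for prompt, check in cases if check(model(prompt)))
    return passed / len(cases)


def safe_to_switch(current, candidate, cases=CASES, tolerance=0.0) -> bool:
    """Allow the switch only if the candidate does not regress beyond tolerance."""
    return pass_rate(candidate, cases) >= pass_rate(current, cases) - tolerance


# Stub models standing in for real model calls (assumptions, not a real API).
def current_model(prompt: str) -> str:
    return "Yes" if "prime" in prompt else "OK"


def candidate_model(prompt: str) -> str:
    return "I'm not sure." if "prime" in prompt else "OK"


if __name__ == "__main__":
    print(pass_rate(current_model), pass_rate(candidate_model))
    print(safe_to_switch(current_model, candidate_model))
```

With a model you host yourself, a check like this can gate the upgrade. With a hosted API that changes underneath you, the same suite can only tell you after the fact that something has shifted.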

Enterprises looking to LLMs for that next giant productivity leap should explore utilizing, fine-tuning, and deploying open-source models on infrastructure they own. That’s the safest way to ensure applications that work will keep on working.

Looking to get started with private LLMs? Valohai is an MLOps solution that makes it easy to develop and scale ML solutions on your infrastructure. With Valohai’s Hugging Face integration, you have hundreds of open-source models, including LLMs, at your fingertips, and that’s just the beginning. Book a demo to hear more.
