If machine learning is a team sport, like I so frequently hear, machine learning platforms must be the playing fields. And to up your machine learning game, you must have the proper environments to do it.

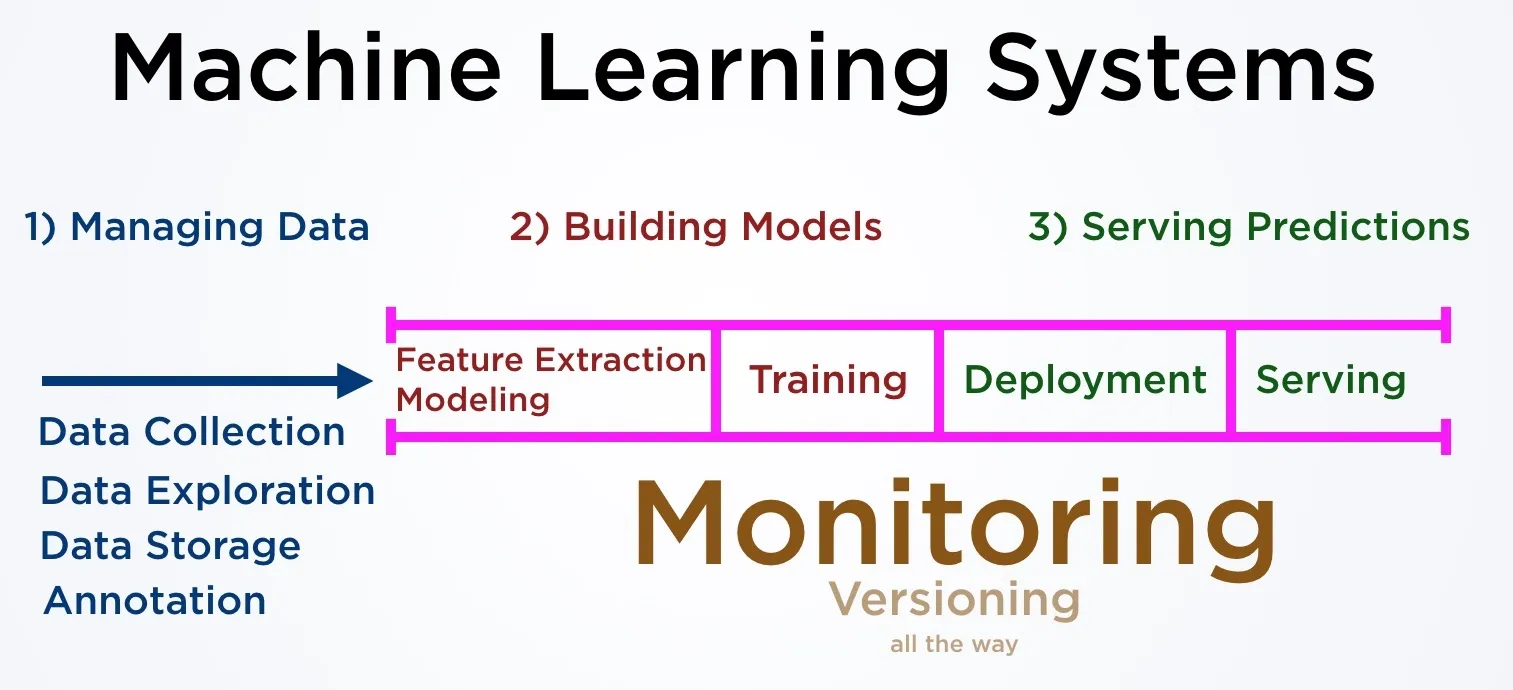

Machine learning platforms are services that support organizations developing machine learning solutions, and multiple companies are developing such tools. What are the best platforms, and how do you choose the right one for you? These platforms focus on one or more components of a machine learning system: 1) managing data, 2) building models, and 3) serving predictions.

As the terminology used with various machine learning offerings can be quite convoluted, let’s start by untwining the high-level terms first.

Simply put, you can think of analytics platforms, data science platforms, machine learning platforms, and deep learning platforms as synonyms. The main thing that differs is the core focus; deep learning platforms offer GPUs for neural network training while data science platforms focus more on traditional data science like decision trees and linear regressions. Commonly, deep learning platforms also support more traditional machine learning approaches, and data science platforms offer GPUs for deep learning. The specific terminology is more of a marketing thing.

Machine Learning as a Service (MLaaS) is a separate family of solutions, which we will cover later in our blog, but in a nutshell MLaaS providers offer API-based microservices with pre-trained models and pre-defined algorithms such as Google Cloud Vision API or Amazon’s Rekognition services. Although they do frequently manifest as SaaS-like platforms, I don’t recognize them as machine learning platforms.

I can imagine it all is an enormous, confusing ball of jargon yarn even for the most educated people, especially as most of the terms haven’t been generalized yet, making marketing materials a patchwork of gobbledygook.

Original photo by Philip Estrada on Unsplash

I’m only going to talk about licensable products and services that have hosted alternatives, which will exclude open source tools like JupyterHub, Kubeflow and Spark. I do this to keep the discussion on solutions that you can pick up and start using right away, as most of the open source tooling requires a lot of configuration and glue code to set up the whole pipeline.

All of the previously mentioned ML platform types can be grouped into seven broad categories based on their core focus.

Business Intelligence data science platforms analyze common business information: market research, website visitor information, sales numbers, financial records, or anything else that most companies already record. Point-and-click interfaces and predefined algorithms are the main features these platforms share. They are easy to use and expensive to buy, they favor domain expertise over data science, and they assume a deeper partnership with the service provider.

Data Management data science platforms focus on storing and querying your data. They are your best bet if you are, for example, proficient in writing Spark jobs but don’t have the in-house expertise or capacity to maintain big data clusters. Their interface sits one abstraction layer lower than most of the other categories but still higher than infrastructure-focused platforms.

Digitalization data science platforms focus on digitalizing manufacturing and other more traditional companies through data automation, usually involving predictive maintenance, productivity bottleneck detection, and uptime predictions. The type of data analyzed is domain specific, e.g., machine sensor information or vehicle fuel usage.

Infrastructure data science platforms feel more like IaaS providers than PaaS or SaaS. This category is in many ways the opposite of business intelligence platforms, requiring a lot of additional glue code to get your machine learning system going. They are ideal for organizations requiring highly customized solutions.

Lifecycle Management platforms focus on the projects and workflow to build machine learning solutions. You define the problem scope, acquire/explore/transform the related data, create/validate/optimize solution hypotheses by modeling and finally deploy/version/monitor the prediction-giving model. These are the most full-fledged end-to-end services that require only a modest amount of glue code while not sacrificing too much extensibility.

Notebook hosting platforms focus on offering Jupyter notebooks or RStudio workspaces for exploratory data analysis. These are naturally the first places to start as an individual data scientist, but shared notebooks can cause compounding technical debt to your machine learning system if they remain your primary way of versioning and delivering machine learning code.

Record-keeping platforms focus on visualizing machine learning pipeline steps and keeping a history of what each artifact, like a model, consists of. These platforms rarely run any code themselves; they mainly work as an add-on that plugs in to get reporting rolling. Most other platforms have a similar feature built in, but there are various situations where a custom machine learning system works excellently and extra record-keeping wouldn’t hurt.

Note that these categories aren’t exclusive. For example, business intelligence platforms can include handling of big data, and they frequently do, but the categorization helps to find the core focus of the platform compared to the other services. If you know a service’s core focus, you’ll understand whether you are an ideal customer for it or not.

| Service/Provider | Category | Focus Areas |
|---|---|---|
| Azure ML Studio | Business Intelligence | point-n-click graphs |
| Magellan Blocks | Business Intelligence | point-n-click graphs |
| KNIME | Business Intelligence | point-n-click graphs |
| SAP Predictive Analytics | Business Intelligence | point-n-click, automated analytics on SAP HANA data |
| Alteryx | Business Intelligence | point-n-click, dashboards |
| DataRobot | Business Intelligence | point-n-click, dashboards |
| JASK ASOC Platform | Business Intelligence | point-n-click, dashboards |
| Dataiku | Business Intelligence | point-n-click, dashboards, hosted notebooks |
| Ayasdi | Business Intelligence | point-n-click, dashboards, topological data analysis |
| RapidMiner | Business Intelligence | point-n-click, data collection |
| Meeshkan | Business Intelligence | point-n-click, data streams to predictions |
| Teradata Analytics Platform | Business Intelligence | point-n-click, hosted notebooks |
| BigML | Business Intelligence | point-n-click, model visualizations |
| SAS Platform | Business Intelligence | point-n-click, model visualizations |
| Angross | Business Intelligence | point-n-click, numeric big data e.g. banking |
| H2O.ai | Business Intelligence | proprietary code, big data, spark integration |
| Pachyderm | Data Management | container-based, data pipelines, collaboration |
| MapR | Data Management | hadoop-based, performance, customization |
| Cloudera | Data Management | hadoop-based, point-n-click |
| Hortonworks | Data Management | hadoop-based, Windows |
| Sentenai | Data Management | real-time data, hosted notebooks |
| Immuta | Data Management | sharing your data outside |
| Databricks | Data Management | spark-based, DIY |
| MAANA | Digitalization | point-n-click, industry productivity |
| Uptake | Digitalization | point-n-click, industry uptime |
| Contiamo | Digitalization | point-n-click, process automation |
| Spell | Infrastructure | deep learning |
| Google ML Engine | Infrastructure | deep learning (TensorFlow), DIY |
| Bitfusion | Infrastructure | deep learning, DIY |
| Seldon | Infrastructure | deployment, kubernetes, DIY or hosted |
| Yhat | Infrastructure | deployment, model versioning |
| cnvrg.io | Lifecycle Management | deep learning, collaboration |
| Valohai | Lifecycle Management | deep learning, collaboration, optimization, deployment |
| FloydHub | Lifecycle Management | deep learning, exploration, collaboration |
| Onepanel | Lifecycle Management | deep learning, exploration, collaboration |
| RiseML | Lifecycle Management | deep learning, kubernetes, optimization |
| Neptune | Lifecycle Management | deep learning, visualization, hosted notebooks |
| Clusterone | Lifecycle Management | distributed learning, kubernetes, collaboration |
| SherlockML | Lifecycle Management | exploration, collaboration, deployment |
| Bonsai | Lifecycle Management | reinforcement learning |
| MissingLink | Lifecycle Management | sharing datasets, public projects |
| Azure Notebooks | Notebook Hosting | exploration |
| IBM Watson Studio | Notebook Hosting | exploration |
| Domino Data Lab | Notebook Hosting | exploration, collaboration, modeling |
| Anaconda Enterprise | Notebook Hosting | exploration, collaboration, open source |
| Gigantum | Notebook Hosting | exploration, collaboration, self-hosted |
| AWS SageMaker | Notebook Hosting | exploration, deployment |
| Kaggle Kernels | Notebook Hosting | exploration, sharing notebooks, sharing datasets |
| Comet | Record-keeping | visualization, record-keeping |

I originally wanted to document the base per-seat-per-month licensing fees of the offerings, but unfortunately, most of the companies don’t disclose pricing models openly on their websites. Recording an estimated monthly price would require booking a call with each of the companies, which makes cost comparison tedious. (Mental note: make pricing as transparent and easy to find as possible.)

So which data science platform should you choose? I advise looking at your current machine learning model lifecycle and noting down which parts are the most time-consuming or lacking.

- Do you have reliable data preparation and training but history information and visualizations are not being recorded? Go with Comet.

- Do you need to heavily subsample your dataset for model training because downloading ten terabytes from S3 to your training machine is not feasible? Book a call with MapR.

- Do you have nothing or just a few shell scripts that control a small cluster of virtual machines that you SSH into to run data preparation, model training, and deployment? Pick one of the full lifecycle management packages like Valohai.
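
If you like to keep such notes in code, the checklist above can be sketched as a tiny lookup. This is only an illustration, not a real selection tool: the `Gap` labels, the `suggest` function, and the "hours lost per week" measure are all names I made up for the example, and the mapping simply restates the three bullets above.

```python
from enum import Enum


class Gap(Enum):
    """Lifecycle gaps from the checklist above (hypothetical labels)."""
    NO_RECORD_KEEPING = "history and visualizations are not recorded"
    DATA_TOO_BIG = "dataset is too large to move to the training machine"
    NO_WORKFLOW = "only ad hoc shell scripts and SSH sessions"


# Restates the three bullets: gap -> (category, example provider).
SUGGESTIONS = {
    Gap.NO_RECORD_KEEPING: ("Record-keeping", "Comet"),
    Gap.DATA_TOO_BIG: ("Data Management", "MapR"),
    Gap.NO_WORKFLOW: ("Lifecycle Management", "Valohai"),
}


def suggest(hours_lost_per_week: dict[Gap, float]) -> tuple[str, str]:
    """Return the category and example provider for the costliest gap."""
    if not hours_lost_per_week:
        raise ValueError("note down at least one gap first")
    # Pick the gap with the largest estimated weekly cost (an assumed measure).
    worst = max(hours_lost_per_week, key=hours_lost_per_week.get)
    return SUGGESTIONS[worst]


if __name__ == "__main__":
    notes = {Gap.NO_RECORD_KEEPING: 2.0, Gap.NO_WORKFLOW: 6.5}
    category, provider = suggest(notes)
    print(f"Start with {category} platforms, e.g. {provider}")
```

The point is less the code than the habit: write down where the time goes first, and let the biggest gap decide which category you evaluate.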

If you feel that I’ve missed an important platform or that some of the information is inaccurate, drop me an email at ruksi@valohai.com and I’ll make adjustments ;)