A new wave of automation is being enabled by the combination of machine learning and smart devices. ML-enabled devices will have a profound impact on our daily lives, from smart fridges to cashierless checkout and self-driving cars. As use cases grow more complex and the number of devices increases, we’ll have to adopt new strategies to deploy those ML capabilities to users and manage them.

In this article, we will examine the benefits of running inference on edge devices, the key challenges, and how MLOps practices can mitigate them.

Understanding the key concepts

Before we go into the benefits of MLOps for IoT and edge, we need to make sure that we are on the same page by going through some basic concepts. There are only four of them, so fear not.

IoT, or the Internet of Things, is a network of devices with sensors that can collect, process and exchange data with other devices over the Internet or any other type of connection between them.

AIoT, or Artificial Intelligence of Things, is the combination of artificial intelligence technologies with the IoT infrastructure. It allows for more efficient IoT operations, advanced data analytics, and improved human-machine interactions.

Edge Computing is a “distributed computing framework that brings enterprise applications closer to data sources such as IoT devices or local edge servers. This leads to faster insights, improved response times, and better bandwidth availability.” (IBM) It also makes it possible to apply machine learning algorithms at the edge.

MLOps is a practice that aims to make developing and maintaining production machine learning seamless and efficient. If you aren’t yet familiar with the term, you can read more in our MLOps guide.

Background overview

As more organizations adopt ML, the need for model management and operations has increased drastically and given birth to MLOps. Alongside this comes the surge in the Internet of Things. According to Statista, global Internet of Things spending is projected to reach 1.1 trillion US dollars by 2023. In addition, the number of active IoT-connected devices is projected to reach 30.9 billion by 2025.

Cloud computing has long facilitated communication between IoT devices, as it provides the APIs through which connected and smart devices interact. However, one major disadvantage of cloud computing is latency: every request has to make a round trip to a remote server, which makes real-time processing difficult. Therefore, as the number of active IoT-connected devices continues to rise drastically, there is a need for better technology.

This gave birth to edge computing, which can be used to process time-sensitive data. It is also a better alternative for remote locations where there is limited or no connectivity. However, as pointed out in Emmanuel Raj’s thesis from 2020, with the growing potential of IoT and edge we face a major computing challenge.

For example, how do you manage and monitor ML models across edge devices at scale? How do you enable CI/CD? How do you secure devices and the communication between them? How do you guarantee the efficiency of the system?

MLOps for IoT and Edge will differ significantly from more traditional use cases because there aren’t as many established patterns.

The benefits of machine learning at the edge

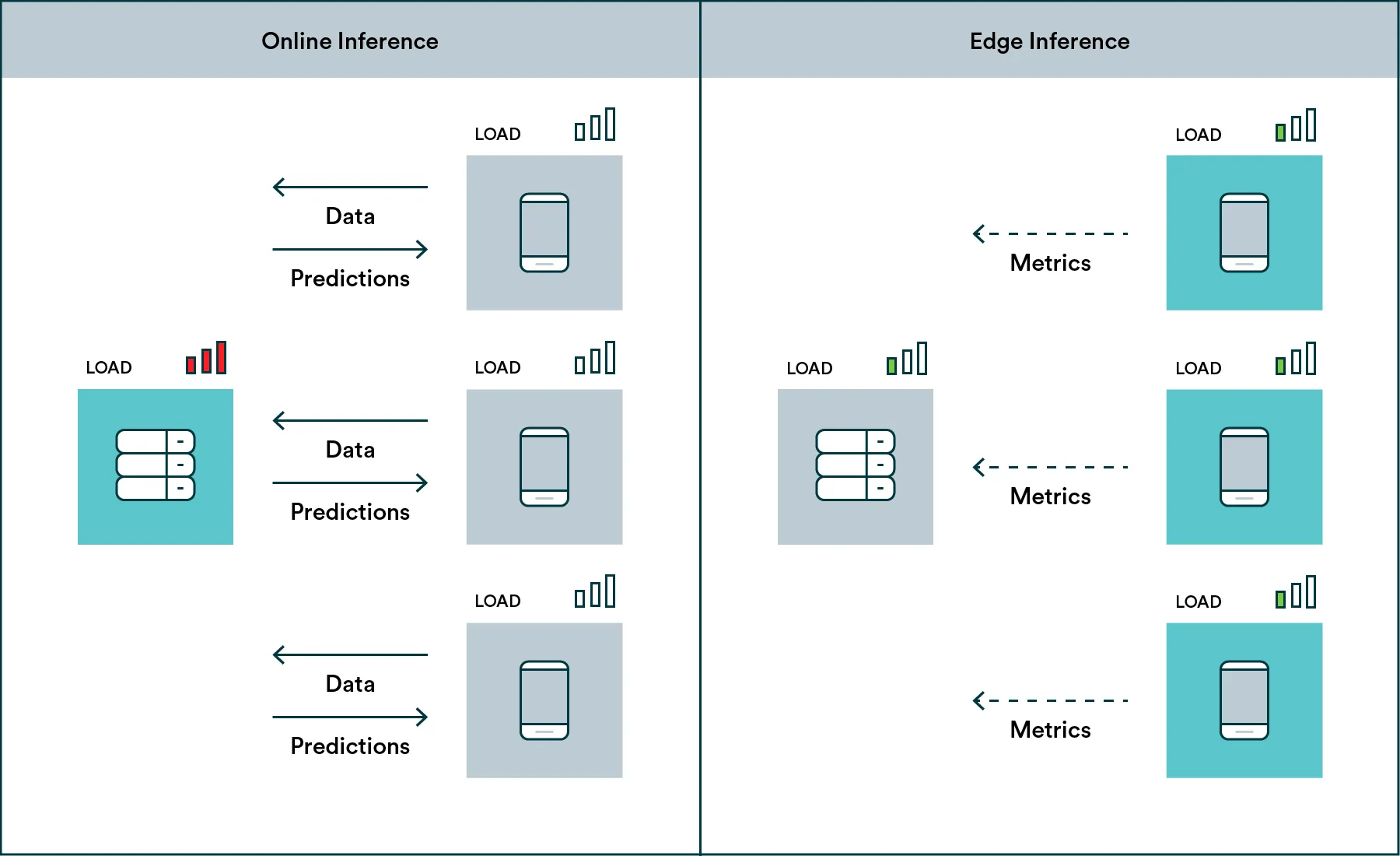

Most MLOps literature and solutions focus on online inference, in other words, running a model in the cloud and having end-user applications communicate with the model through APIs. In many use cases, however, moving the algorithm closer to the actual end user is very beneficial, so edge inference shouldn’t be overlooked.

Moving the model to the device, whether it’s a mobile phone or a device like NVIDIA Jetson, unlocks high scalability, privacy, sustainability, affordability, and adaptability. These are all aspects that can be very difficult to address via online inference. This move also makes the IoT devices themselves more reliable because they’ll be able to function even with limited connectivity, which can be critical for use cases such as medical or security devices.

With edge inference, the model runs on the devices on location, and thus scale is handled by the devices themselves.

An ML system that runs inference on the device itself will have unique benefits:

- The system will scale automatically because each new device will handle its own workload. This can also make the system more adaptable to changing circumstances and more cost-effective.
- The system will more easily address privacy concerns because the data doesn’t have to leave the end user’s device.
- Each edge device can make real-time decisions without the added latency of a round trip to the cloud.
- Each edge device can make decisions even without a connection to a central server.
- Each edge device can even run its own version of the trained model to address the specific needs of the environment.
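
The last point can be illustrated with a minimal sketch of how a device might choose which version of a model to run. Everything here is an assumption made for illustration: the variant names, the memory thresholds and the `pick_variant` helper are hypothetical, and a real setup would likely weigh more than available memory.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ModelVariant:
    name: str
    min_memory_mb: int  # smallest device this variant is assumed to fit on


# Hypothetical variants of the same trained model, from heavy to light.
VARIANTS = (
    ModelVariant("detector-large", 4096),
    ModelVariant("detector-small", 1024),
    ModelVariant("detector-tiny-int8", 256),
)


def pick_variant(device_memory_mb: int,
                 variants: Sequence[ModelVariant] = VARIANTS) -> ModelVariant:
    """Return the most demanding variant that still fits on the device."""
    by_size = sorted(variants, key=lambda v: v.min_memory_mb, reverse=True)
    for variant in by_size:
        if device_memory_mb >= variant.min_memory_mb:
            return variant
    raise ValueError(f"no variant fits a device with {device_memory_mb} MB")
```

A device with 2 GB of memory would end up with `detector-small` under these assumed thresholds, while a device below 256 MB would be rejected outright.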

An ML solution that relies on edge devices can take many shapes. For example, it can be your phone running a face recognition model specifically trained to recognize you. Alternatively, it can be a local computer that connects to all the surveillance cameras on site and raises alerts on any suspicious activity. Both will benefit from the points above but will be quite different to maintain and manage, which brings us to the next logical point: the challenges.

The challenges of edge machine learning and how MLOps can help

There is a logical reason why most MLOps literature focuses on online inference: from a management point of view, it’s simple. You maintain a model that translates into a single API endpoint that can be updated and monitored.

In edge ML, most use cases are unique snowflakes and processes can get quite complex. But proper implementation of MLOps can certainly make things easier. Let’s look at a few of the challenges:

- Testing is hard. Edge devices come in many different shapes and sizes. Take the Android ecosystem, for example: compute power varies widely between devices, and it’ll be hard to test that your model is efficient enough for all of them. However, a robust machine learning pipeline could include various tests to address model performance.
- Deployment is hard. Updating a single endpoint is trivial. Updating thousands of devices is much trickier. In most cases, you’ll want to integrate your ML pipeline and model store with other tools that take care of updating the edge devices. For example, your application CI/CD will fetch the latest model from your model store.
- Monitoring is hard. With online inference, you collect metrics from that single endpoint, but with edge ML, you’ll instead need to have each device report back, and you still may not have full visibility into the environment the model is operating in. You’ll want to design your monitoring system so that it can receive data from many sources, some of which may only report back occasionally.
- Data collection is hard. Privacy comes at a cost because, unlike with online inference, you don’t actually have direct access to the “real-world” data. Building continuous loops to collect data from edge devices can be a complex design challenge. You’ll want to consider mechanisms that allow you to collect data when your model fails (for example, the user can opt to send a picture when the object recognition model doesn’t work). These samples can then be labeled and added to the training dataset in your ML pipeline.
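
To make the deployment point more concrete, here is a minimal sketch of the check a device (or the CI/CD job building its application) might run against a model store. It assumes the store publishes a version number and a SHA-256 checksum for each release; the `ModelManifest` record and the `download` callable are hypothetical stand-ins for whatever your model store actually exposes.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ModelManifest:
    """Hypothetical record a model store might publish for each release."""
    version: int
    sha256: str


def needs_update(installed_version: Optional[int], manifest: ModelManifest) -> bool:
    # A device with no model yet, or with an older one, should update.
    return installed_version is None or manifest.version > installed_version


def verify_artifact(data: bytes, manifest: ModelManifest) -> bool:
    # Reject downloads that were corrupted or tampered with in transit.
    return hashlib.sha256(data).hexdigest() == manifest.sha256


def apply_update(installed_version: Optional[int],
                 manifest: ModelManifest,
                 download: Callable[[], bytes]) -> Optional[int]:
    """Return the model version the device should run after this check."""
    if not needs_update(installed_version, manifest):
        return installed_version
    data = download()  # assumed to return the raw model file
    if not verify_artifact(data, manifest):
        return installed_version  # keep running the old model
    return manifest.version
```

Keeping the old model when verification fails is a deliberate choice in this sketch: a device that stays on a slightly outdated model is usually preferable to one that stops working after a bad download.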

The primary goal of MLOps for IoT and edge is to ease these challenges. Continuous loops are more difficult to set up in the realm of edge inference. In a usual setup, your model is trained in the cloud, then picked up by a CI/CD system and deployed to a device as part of an application. The application will run inference on the device and report metrics and data back to the cloud in batches, where you can close the loop, retrain and improve your model.
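
The reporting half of that loop could look roughly like the sketch below: the device buffers inference records locally and sends them in batches whenever a connection is available. The `MetricsBuffer` class and its `send` callback are hypothetical; in practice `send` would wrap whatever transport your monitoring backend expects.

```python
from collections import deque
from typing import Callable, Deque, Dict, List


class MetricsBuffer:
    """Buffers inference records on the device and reports them in batches."""

    def __init__(self, send: Callable[[List[Dict]], bool],
                 batch_size: int = 50, max_records: int = 1000):
        self._send = send              # returns True when a batch was accepted
        self._batch_size = batch_size
        # Bounded buffer: the oldest records are dropped if the device
        # stays offline for too long.
        self._records: Deque[Dict] = deque(maxlen=max_records)

    def record(self, prediction: str, confidence: float) -> None:
        self._records.append({"prediction": prediction, "confidence": confidence})

    def flush(self) -> int:
        """Try to send everything buffered; return how many records got through."""
        delivered = 0
        while self._records:
            size = min(self._batch_size, len(self._records))
            batch = [self._records[i] for i in range(size)]
            if not self._send(batch):
                break  # still offline, keep the records for the next attempt
            for _ in range(size):
                self._records.popleft()
            delivered += size
        return delivered

    def __len__(self) -> int:
        return len(self._records)
```

Records are only removed from the buffer after `send` confirms the batch, so a dropped connection mid-flush leaves the unsent data in place for the next attempt.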

Your solution

As mentioned before, each edge ML case is still quite unique and there isn’t a single pattern that works for all. Your end solution will likely combine your own custom code with off-the-shelf products. The priority should be on mapping out all the components required to form a continuous loop and figuring out how they talk to each other. We are huge proponents of open APIs, and the Valohai MLOps platform is built API-first so all functionalities can be accessed through code and tools can be integrated seamlessly.

For us, MLOps isn’t just something for online use cases. We think the same best practices of building continuous loops and automating them as much as possible should be the de facto standard for edge too. If taking your organization closer to the edge is something you would like to do, we will be happy to discuss your case and advise you on possible solutions. So, be sure to book a call and talk to us.