Most small teams start out managing tasks by hand, including training machine learning models, cleaning data, deploying models, and tracking results. As the team and its services grow, however, the number of repetitive tasks increases, and so do the interdependencies among them. The pipeline of tasks turns into a network with dynamic branches.

This network can be modeled as a directed acyclic graph (DAG). Workflow orchestration tools let you define your tasks and their dependencies as a DAG. The tool then executes the tasks in the correct order and on schedule, retries failed tasks, monitors progress, and alerts you to any failures.
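As a rough illustration of what an orchestrator does with a DAG, the sketch below uses Python's standard-library `graphlib` (Python 3.9+) to run tasks in dependency order and retry failures. The `run_dag` function, the task names, and the retry policy are hypothetical; real tools such as Airflow and Kubeflow add scheduling, distributed execution, monitoring, and alerting on top of this core idea.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set


def run_dag(
    tasks: Dict[str, Callable[[], None]],
    deps: Dict[str, Set[str]],
    max_retries: int = 2,
) -> List[str]:
    """Run tasks in dependency order, retrying each failed task up to max_retries times.

    `deps` maps each task to the set of tasks it depends on.
    Returns the order in which tasks completed; raises RuntimeError if a task
    still fails after all retries (a real orchestrator would alert here).
    """
    completed: List[str] = []
    # static_order() yields every task only after all of its dependencies.
    for name in TopologicalSorter(deps).static_order():
        for attempt in range(1 + max_retries):
            try:
                tasks[name]()
                completed.append(name)
                break  # success: move on to the next task
            except Exception as exc:
                print(f"{name} failed on attempt {attempt + 1}: {exc}")
        else:
            # The loop ran out of attempts without a successful `break`.
            raise RuntimeError(f"task {name!r} failed after {max_retries} retries")
    return completed


# Hypothetical pipeline: the training task fails once, then succeeds on retry.
attempts = {"train": 0}


def flaky_train() -> None:
    attempts["train"] += 1
    if attempts["train"] < 2:
        raise ConnectionError("GPU node unavailable")


tasks = {
    "clean": lambda: None,
    "train": flaky_train,
    "evaluate": lambda: None,
    "deploy": lambda: None,
}
deps = {"train": {"clean"}, "evaluate": {"train"}, "deploy": {"evaluate"}}

order = run_dag(tasks, deps)
print(order)  # ['clean', 'train', 'evaluate', 'deploy']
```

Running it prints one failure message for the first `train` attempt and then completes all four tasks in order. A production orchestrator would also run independent branches in parallel, which `static_order()` does not do; `TopologicalSorter.get_ready()` is the standard-library hook for that.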

No doubt, orchestration tools are magic! But there are a lot of them out there, and teams often find it difficult to decide which one to choose.

In this article, we will unravel the similarities and core differences between Kubeflow and Airflow. Before we start, though, let's preface this by saying that Kubeflow and Airflow are built for very different purposes, and they shouldn't be compared as like-for-like alternatives.

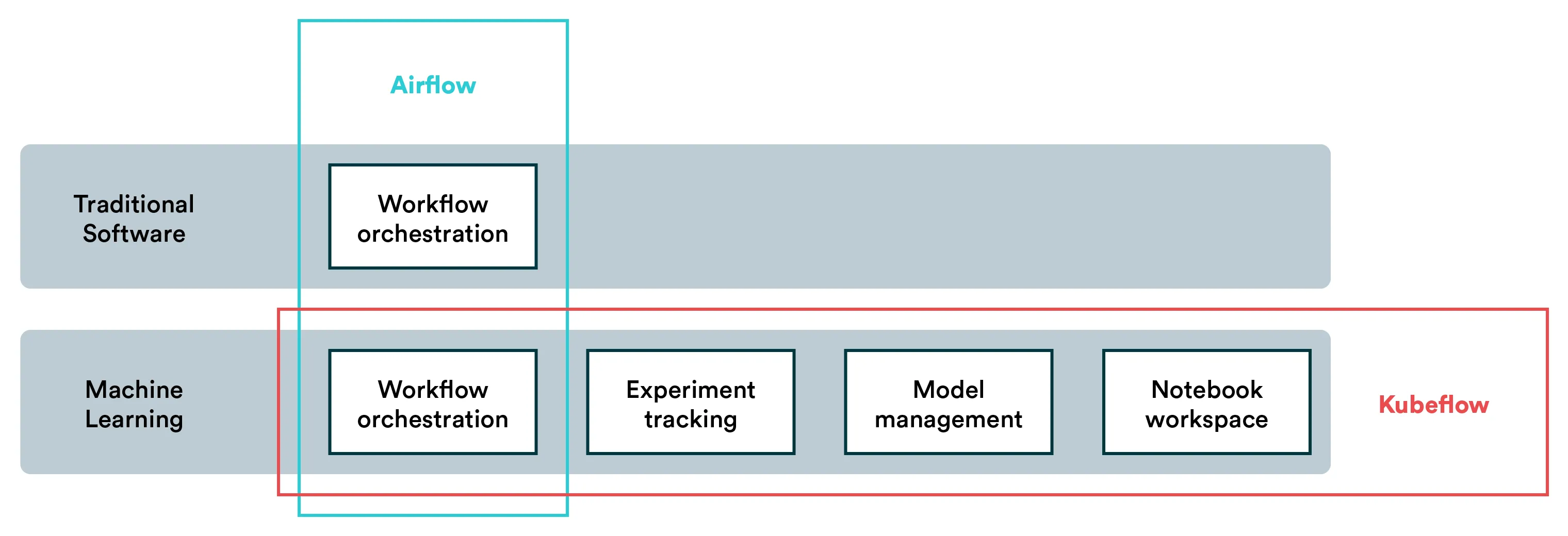

The figure above shows how Airflow focuses on a single function while Kubeflow focuses on a use case.

Components of Kubeflow

Kubeflow is a free and open-source ML platform that lets you use ML pipelines to orchestrate complicated workflows running on Kubernetes. It started as an open-source version of the way Google ran TensorFlow internally, based on a pipeline called TensorFlow Extended (TFX).

The logical components that make up Kubeflow include the following:

-

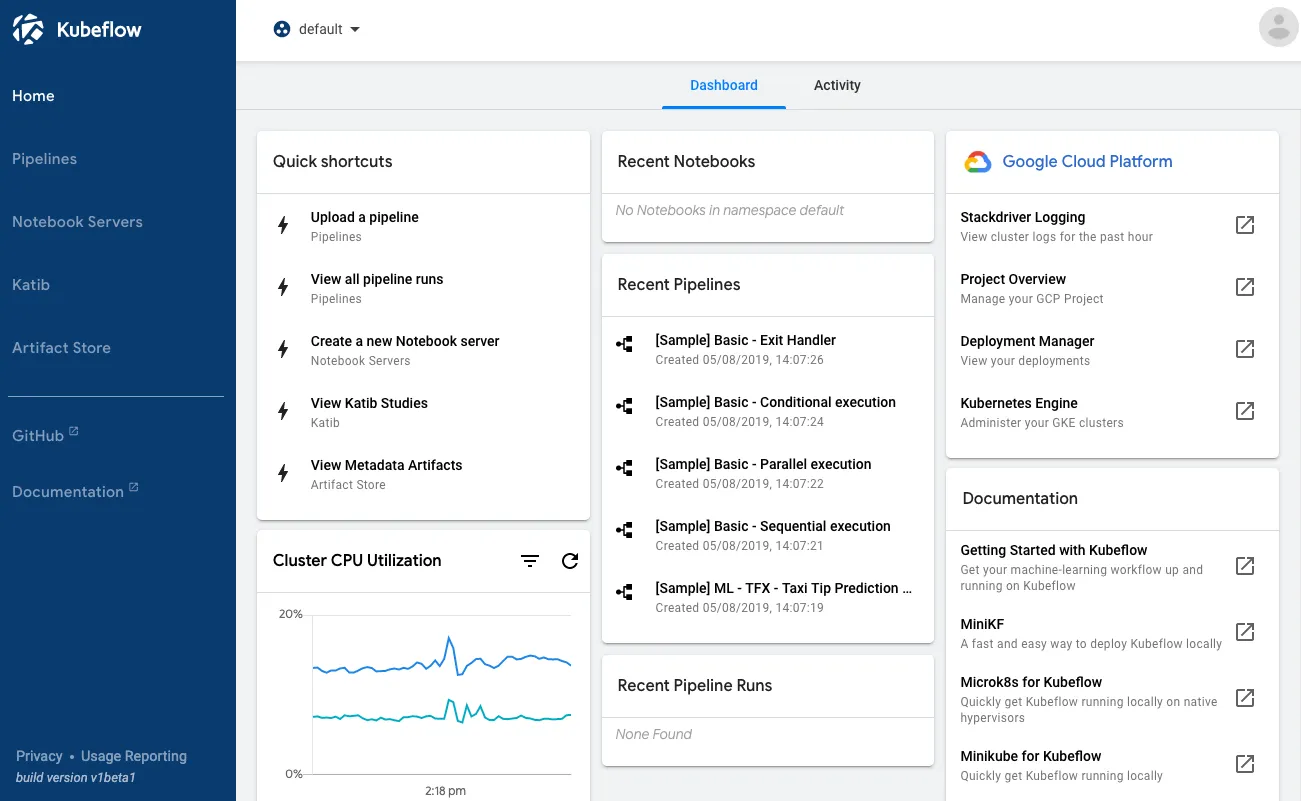

Kubeflow Pipelines: Empower you to build and deploy portable, scalable machine learning workflows based on Docker containers. It consists of a user interface to manage jobs, an engine to schedule multi-step ML workflows, an SDK to define and manipulate pipelines, and notebooks to interact with the system via SDK.

-

KFServing: Enables serverless inferencing on Kubernetes. It also provides performant and high abstraction interfaces for ML frameworks like PyTorch, TensorFlow, scikit-learn, and XGBoost.

-

Multi-tenancy: Simplifies user operations to allow each user to view and edit only the Kubeflow components and model artifacts in their configuration. Key concepts under this Kubeflow’s multi-user isolation include authentication, authorization, administrator, user and profile.

-

Training Operators: Enables you to train ML models through operators. For instance, it provides Tensorflow training (TFJob) that runs TensorFlow model training on Kubernetes, PyTorchJob for Pytorch model training, etc.

-

Notebooks: Kubeflow deployment provides services for managing and spawning Jupyter notebooks. Each Kubeflow deployment can include multiple notebook servers and each notebook server can include multiple notebooks.
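To make the multi-tenancy concepts more concrete, here is a minimal sketch in plain Python of the access rule they describe: an authenticated user can see a profile's artifacts only if they own it, were added as a contributor, or are an administrator. The `Profile` and `ProfileRegistry` classes and the example email addresses are hypothetical. In an actual Kubeflow deployment, each profile is backed by a Kubernetes namespace and access is enforced by the cluster (for example through Kubernetes RBAC), not by application code like this.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Profile:
    """Hypothetical stand-in for a Kubeflow profile: an owner, shared contributors, and artifacts."""

    name: str
    owner: str
    contributors: Set[str] = field(default_factory=set)
    artifacts: Set[str] = field(default_factory=set)


class ProfileRegistry:
    """Models multi-user isolation: admins see everything, users see only profiles they belong to."""

    def __init__(self, admins: Set[str]):
        self.admins = admins
        self.profiles: Dict[str, Profile] = {}

    def add(self, profile: Profile) -> None:
        self.profiles[profile.name] = profile

    def can_access(self, user: str, profile_name: str) -> bool:
        # Authorization check only; authentication (proving who `user` is)
        # is assumed to have happened upstream.
        profile = self.profiles[profile_name]
        return user in self.admins or user == profile.owner or user in profile.contributors

    def visible_artifacts(self, user: str) -> Set[str]:
        # Union of the artifacts in every profile this user is authorized to see.
        return {
            artifact
            for profile in self.profiles.values()
            if self.can_access(user, profile.name)
            for artifact in profile.artifacts
        }


registry = ProfileRegistry(admins={"admin@example.com"})
registry.add(Profile("team-vision", owner="alice@example.com",
                     contributors={"bob@example.com"}, artifacts={"resnet-v1"}))
registry.add(Profile("team-nlp", owner="carol@example.com", artifacts={"bert-finetune"}))

print(sorted(registry.visible_artifacts("bob@example.com")))    # ['resnet-v1']
print(sorted(registry.visible_artifacts("admin@example.com")))  # ['bert-finetune', 'resnet-v1']
```

Bob sees the vision team's model because he is a contributor to that profile, while the administrator sees artifacts across all profiles.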

Components of Airflow

Airflow is an open-source workflow management platform created by Airbnb in 2014 to programmatically author, monitor and schedule the firm’s growing workflows.

Some of the components of Airflow include the following:

-

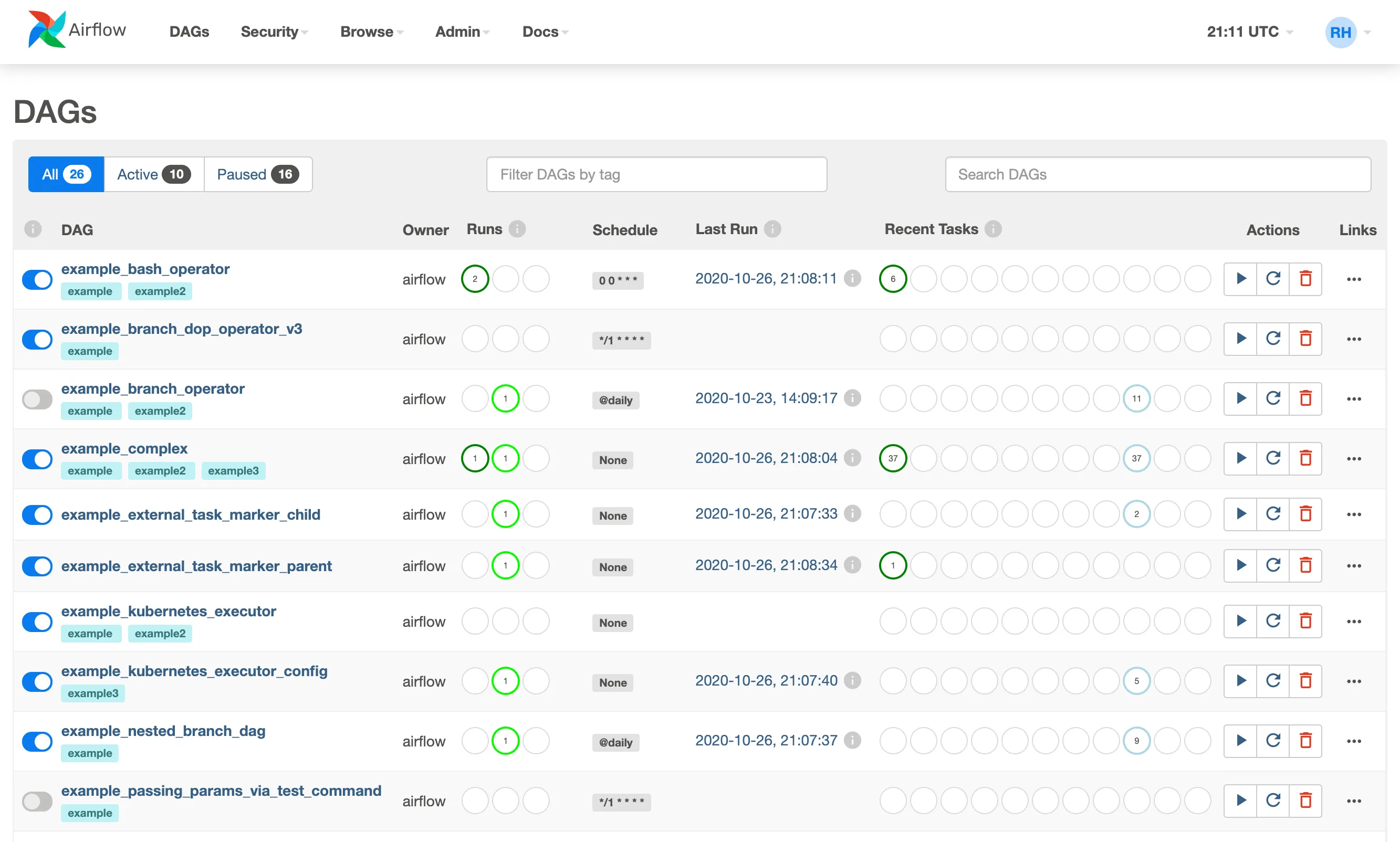

Scheduler: Monitors tasks and DAGs, triggers scheduled workflows, and submits tasks to the executor to run. It is built to run as a persistent service in the Airflow production environment.

-

Executors: These are mechanisms that run task instances; they practically run everything in the scheduler. Executors have a common API and you can swap them based on your installation requirements. You can only have one executor configured per time.

-

Webserver: A user interface that displays the status of your jobs and allows you to view, trigger, and debug DAGs and tasks. It also helps you to interact with the database, read logs from the remote file store.

-

Metadata database: The metadata database is used by the executor, webserver, and scheduler to store state.
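The split between a scheduler, a swappable executor, and a shared metadata database can be sketched in plain Python with the standard-library `sqlite3` and `concurrent.futures` modules. Everything here is hypothetical: the `InlineExecutor` and `ThreadedExecutor` classes, the `schedule` function, and the `task_state` table are illustrations, not Airflow's API (Airflow's own executors include the SequentialExecutor, LocalExecutor, CeleryExecutor, and KubernetesExecutor).

```python
import sqlite3
from abc import ABC, abstractmethod
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


class MetadataDB:
    """Hypothetical metadata store: one row per task holding its latest state."""

    def __init__(self, path: str = ":memory:"):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE task_state (task_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
        )

    def set_state(self, task_id: str, state: str) -> None:
        with self.conn:  # commits the transaction on success
            self.conn.execute(
                "INSERT OR REPLACE INTO task_state (task_id, state) VALUES (?, ?)",
                (task_id, state),
            )

    def get_state(self, task_id: str) -> str:
        row = self.conn.execute(
            "SELECT state FROM task_state WHERE task_id = ?", (task_id,)
        ).fetchone()
        return row[0] if row else "none"


def _call(fn: Callable[[], object]) -> bool:
    """Run one task and report whether it succeeded."""
    try:
        fn()
        return True
    except Exception:
        return False


class Executor(ABC):
    """Common interface: run a batch of ready tasks and report success per task."""

    @abstractmethod
    def run(self, tasks: Dict[str, Callable[[], object]]) -> Dict[str, bool]:
        ...


class InlineExecutor(Executor):
    """Runs tasks one after another in the calling process."""

    def run(self, tasks):
        return {task_id: _call(fn) for task_id, fn in tasks.items()}


class ThreadedExecutor(Executor):
    """Runs tasks concurrently on a pool of worker threads."""

    def __init__(self, workers: int = 4):
        self.workers = workers

    def run(self, tasks):
        with ThreadPoolExecutor(max_workers=self.workers) as pool:
            futures = {task_id: pool.submit(_call, fn) for task_id, fn in tasks.items()}
            return {task_id: f.result() for task_id, f in futures.items()}


def schedule(tasks, executor: Executor, db: MetadataDB) -> None:
    """Scheduler step: mark tasks queued, hand them to the executor, record outcomes."""
    for task_id in tasks:
        db.set_state(task_id, "queued")
    for task_id, ok in executor.run(tasks).items():
        db.set_state(task_id, "success" if ok else "failed")


tasks = {"extract": lambda: None, "broken": lambda: 1 / 0}
for executor in (InlineExecutor(), ThreadedExecutor(workers=2)):
    db = MetadataDB()
    schedule(tasks, executor, db)
    print(type(executor).__name__, db.get_state("extract"), db.get_state("broken"))
# InlineExecutor success failed
# ThreadedExecutor success failed
```

Swapping `InlineExecutor()` for `ThreadedExecutor()` changes how tasks run while `schedule` and the task definitions stay the same, which is the point of a common executor API. Only the main thread writes to the database here, which keeps the `sqlite3` usage simple.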

It’s worth mentioning that because Airflow is solely focused on a single purpose, the components listed here are much lower level than those listed for Kubeflow.

Similarities between Kubeflow and Airflow

Kubeflow and Airflow share some things in common even as they have many differences. Similarities between the two tools include:

- Both Kubeflow and Airflow can be used to orchestrate machine learning pipelines, but with very different approaches. More on that in the next section.

- Both Kubeflow and Airflow are open source, so anyone can use them freely. Both also have active communities from which you can learn and with which you can share ideas, although Airflow's community is larger than Kubeflow's.

- Both platforms have a user interface. In Kubeflow, the interface is called the Central Dashboard, and it provides easy access to all Kubeflow components deployed in your cluster. In Airflow, the user interface provides a full overview of the status and logs of all tasks, both completed and ongoing.

- Both use Python. In Airflow, you write workflows as Python code; in Kubeflow, you can define pipeline tasks in Python using the Pipelines SDK.

Differences between Kubeflow and Airflow

A core difference between Kubeflow and Airflow lies in their purpose and origination.

Kubeflow was created by Google to organize its internal machine learning exploration and productization, while Airflow was built by Airbnb to automate any kind of software workflow.

Below are critical differences that mostly stem from differences in purpose.

- Airflow is purely a pipeline orchestration platform, whereas Kubeflow can do much more than orchestration. Kubeflow focuses on machine learning tasks such as experiment tracking. In Kubeflow, an experiment is a workspace that lets you try different configurations of your pipelines.

- Unlike Kubeflow, Airflow does not offer any ML best practices; it requires you to implement everything yourself. This is no surprise, because Airflow was not originally intended for machine learning pipelines, even though it is used for them today. In fact, while Airflow is commonly classified as a workflow manager, Kubeflow is classified as an ML toolkit for Kubernetes.

- Far more engineers and companies use Airflow than Kubeflow. For instance, Airflow has more forks and stars on GitHub, and it is listed in more company and developer stacks. Popular companies that use Airflow include Slack, Airbnb, and 9GAG. This wide adoption also means that users can easily get support from the community.

- Kubeflow is built to run exclusively on Kubernetes; it works by letting you arrange your ML components on Kubernetes. You DO NOT need Kubernetes to work with Airflow. It is worth pointing out, however, that if you wish to run Airflow on Kubernetes, you can do so through the Kubernetes Airflow Operator.

Summary

Kubeflow and Airflow are comparable only in that both let you build and orchestrate DAGs; beyond that, they have little in common.

Why is this a common comparison then? Oftentimes, engineers (not specifically ML engineers) are tasked with supporting data scientists and automating machine learning workflows, and it’s easiest to stick with the tools you know (i.e. Airflow).

If you need a platform to run a variety of tasks (and maybe you are just automating a single model workflow), Airflow is probably the more viable option as it is a generic task orchestration platform.

However, if you are looking to adopt machine learning best practices and to implement an ML-specific infrastructure solution, then Kubeflow is the better alternative of the two. Bear in mind that you’ll probably be looking at a significantly longer timeframe and you’ll need to brush up on Kubernetes if you haven’t already.

Valohai as an Alternative for Kubeflow and Airflow

[CAUTION: Opinions ahead] As mentioned above, machine learning as a use case has much broader needs than pipeline orchestration. Although important, building and automating a machine learning pipeline is often only a small portion of the work data scientists and machine learning engineers do.

You may be looking at two options for building out your MLOps stack:

- Implementing pipelines with Airflow and supporting other aspects of data science work with tools like MLflow for experiment tracking and BentoML for model deployment.

- Adopting the entire tool stack with Kubeflow (and possibly adopting Kubernetes for the first time too).

Now, I’m not going to argue against using tools like Airflow to build a single workflow. That makes total sense. But if your objective is to build a full-fledged MLOps stack, both options laid out above are hefty investments in terms of time and effort. The third option you might not be considering is a managed MLOps platform – namely Valohai.

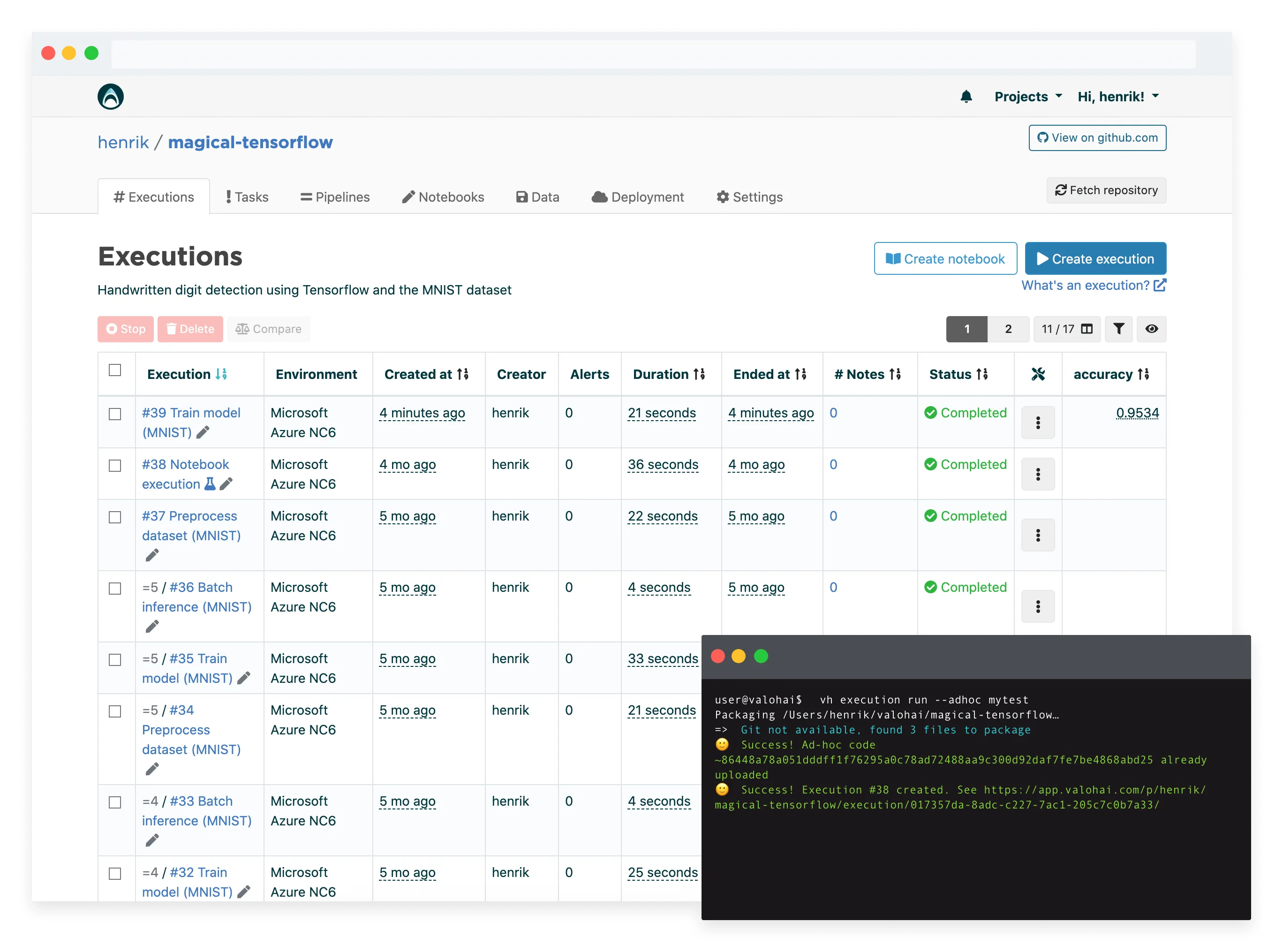

Valohai provides a similar feature set to Kubeflow in a managed service (i.e. you'll be able to skip the setup, the maintenance, and the user support). In addition, Valohai takes a page from Airflow's book and stays more technology-agnostic than Kubeflow. You can run any code and any framework on most popular cloud platforms and on-premises machines, without Kubernetes.

So if you’re looking for an MLOps platform without the resources of a dedicated platforms team, Valohai should be on your list.

If you are interested in learning more, check out:

- Valohai Free Trial

- Valohai Product

- Valohai and Kubeflow Comparison

- MLOps Platform: Build vs Buy

- MLOps Platforms Compared

More Kubeflow comparisons

This article continues our series on common tools teams are comparing for various machine learning tasks. You can check out some of our previous Kubeflow comparison articles:

| Other Kubeflow comparison articles |
|---|
| Kubeflow and MLflow |
| Kubeflow and Airflow |
| Kubeflow and Metaflow |
| Kubeflow and Databricks |
| Kubeflow and SageMaker |
| Kubeflow and Argo |