Ville Tuulos, machine learning infrastructure architect, was the first to publicly dissect Netflix’s Machine Learning infrastructure at QCon in November 2018 in San Francisco. If you haven’t seen the talk yet, read the summary of his talk here! All the pictures used here, are from Ville’s presentation.

The full talk is 49 minutes long and you can watch it in its entirety on YouTube .

From a scattered toolset to a coherent machine learning platform

Ville starts by comparing Machine Learning Infrastructure to an online store and how building one was truly a technical problem twenty years ago. Back then you needed to build the whole online shop yourself starting from setting up the servers because the cloud did not exist. New platforms and technologies have since emerged that allow basically anyone to build up an online store and nowadays it is more about knowing the customers than setting up the webshop.

Whilst machine learning infrastructure is a timely topic today, in the near future companies do not have to think about setting up the whole infrastructure from scratch. Soon there will be clear standards and tooling in place to start solving the real business problems immediately. We believe that the build vs. buy debate will vanish in ten years or less. Building your own ML infrastructure at this point is like building your own GitHub – certainly possible, but your time and resources are undoubtedly better spent elsewhere.

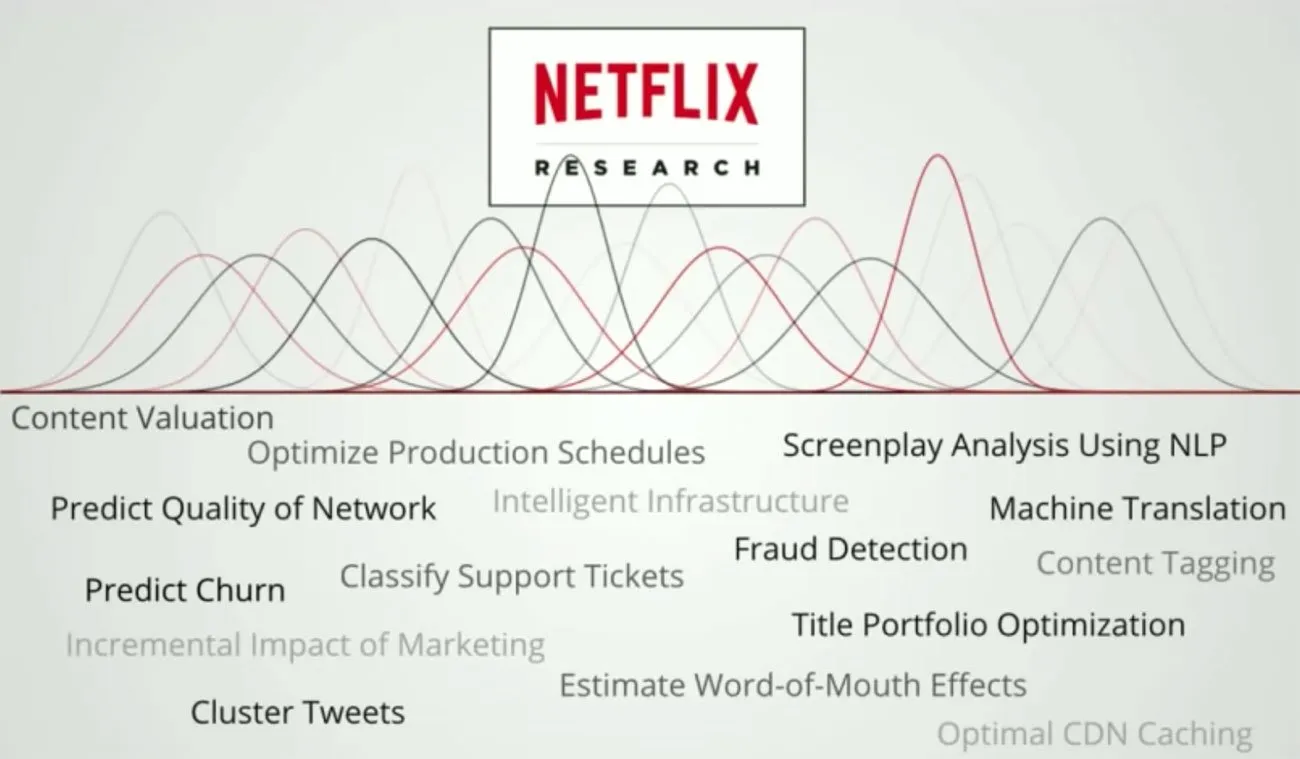

Why machine learning infrastructure is important for Netflix

Couple years back machine learning infrastructure was a technical problem at Netflix. Nowadays the machine learning development is coming more human centric and their infrastructure development is guided by two key principles:

- Make data scientists more productive

- Make it easier to apply ML to different business problems

While it might seem like a good idea to start with one technology, you most certainly do not want to tie up the whole infrastructure to work with for example only one language or framework.

At Netflix, they are leveraging machine learning across the whole company. Data scientists building machine vision models with Python and TensorFlow are not the best people to build revenue models or other numeric based problems with R. Hence you need to hire data scientists specialized in each problem domain. But often the case is that the person with domain expertise is not the DevOps specialist who is also mastering cloud infrastructure setup, and that is when a well-oiled machine learning infrastructure comes into play.

To truly foster machine learning across the whole organization you want to have a technology-agnostic solution. As the business scale and problems become more diverse you can be certain that there will be different kinds of issues and endless amounts of solutions to them.

Ville admits that, in the future, there will be some kind of standard solution that lets data scientists apply machine learning to very different kinds of problems. However, at Netflix they wanted to be ahead of the competition and solve their customers’ problems with machine learning already today. Hence they needed to build the infrastructure in order to do that.

What kind of problems should a machine learning infrastructure solve?

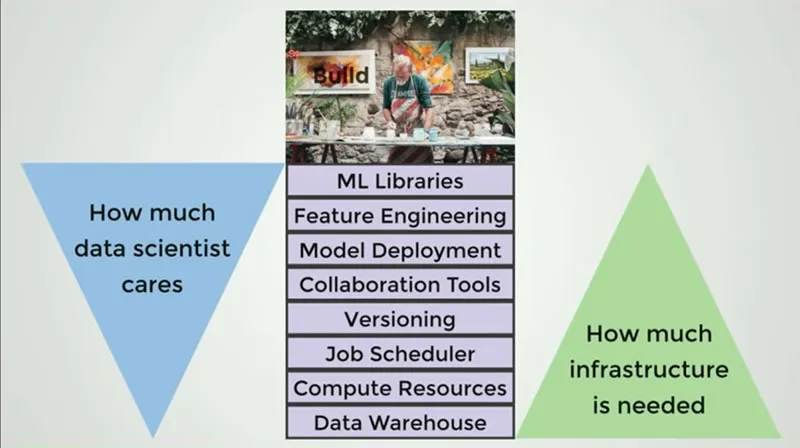

According to Ville, the machine learning workflow can be divided into these eight building blocks:

- Data Warehouse , data scientists should have access to data but they should not need to setup and maintain a data warehouse

- Computer resources , data scientists do not care where the CPU/CPU/TPU resources come from

- Job scheduler , a system to orchestrate the jobs and run certain trainings on a daily basis

- Versioning , all responsible data scientist should version their notebooks, experiments, and data. Currently no-one does it because it isn’t easy enough.

- Collaboration tools , well, in general people think that collaboration and knowledge sharing is good.

- Deployment , data scientists might have never deployed anything. Especially if they come from a research background. Deployment is a trivial task and when you have a solution that automatically pushes models into production, people usually are pretty ok with that. Each and everyone on the machine learning team doesn’t have to have a detailed knowledge on how deployment happens.

- Feature engineering , data scientists should understand the data thoroughly so they should use their time in this stage of the development workflow.

- Machine learning libraries , companies should not provide certain libraries. When the number of machine learning applications inside a company grows, you want to let the data scientist choose their own ways of working.

In the middle of the picture above there are stages that should be considered while building machine learning infrastructure and the arrows on Ville Tuulos’s side illustrates that, in general, the more infrastructure is needed to perform a certain step the less data scientists care about how it is done. Data scientists should focus their creative energy more on data and libraries than on storing and setting up computing instances.

The infrastructure team should care about things that data scientists should not care about and these are exactly the same issues that Valohai takes care for our customers. There is no need to hire a separate infrastructure team when you have third party solution like Valohai by your side.

Deployment in 7 days from starting the project

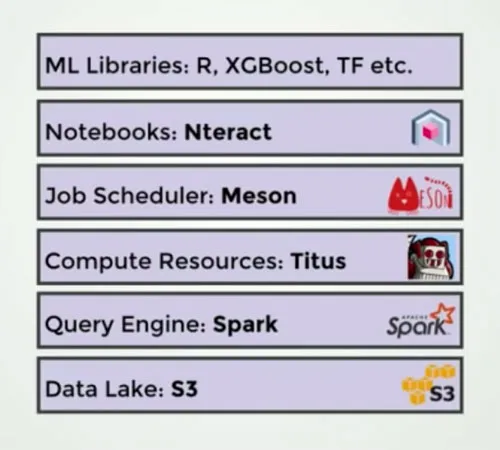

In the talk, Ville describes how they started a project to analyse sentiments in tweets written about Netflix series. Even though Netflix had different tools that basically allowed to execute each step in the model building, nothing was connecting these steps and as Ville put it: “ Nothing was easy for data scientists. ”

The hard questions, however, arose only after the model was in production. Questions such as:

- How should we monitor the models?

- How do we run and access data at scale?

- How do we schedule the model to update daily?

- How do we debug a failed run?

- How do we make this faster?

- How do we iterate on a new version without breaking the production version?

- How do we let another data scientist iterate on her version of the model safely?

They checked the code written for the project and found out that 60% of the code was related to infrastructure and only 40% of the code was data science. After having the first experiences in building the production scale ML solutions and pondering over the questions above, Ville and others at Netflix felt that they were missing a piece of infrastructure.

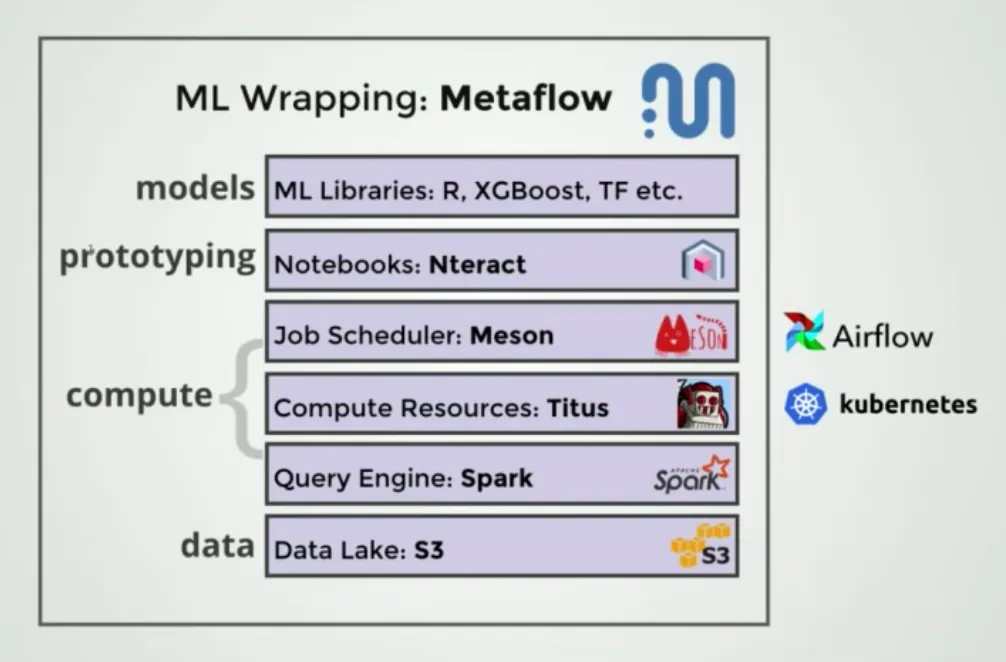

Identifying this burden cost by the lack of surrounding infrastructure lead Netflix to create their own machine learning platform MetaFlow that wraps inside it different tools needed at different development steps.

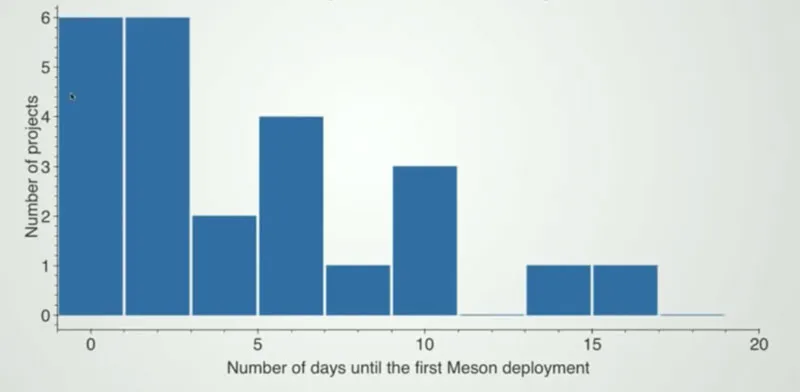

Before building the supportive infrastructure, from starting the project to deployment took four months. Today it takes 7 days (median). Hence working infrastructure allows for much faster iteration and is a significant competitive advantage for Netflix.

What did you learn?

What toolchain should your team be using to bring models to production faster? It is not enough to have separate tools for each different step in machine learning development workflow, but the best option is to have a standardized platform that supports all tools, languages and frameworks you’ll need. Valohai Deep Learning Platform is a managed service that includes all of this. Everything from automatic version control to easy machine orchestration and management for ML development pipelines. For tips on how to build your own ML infrastructure, check out our managed vs. open-source MLOps platform guide or ask for a demo to learn what Valohai can do for you.

Interested in reading more about Netflix? Check out our interview with Ville Tuulos on why Netflix is building what they do!